Calibration of thermocouples in the DAQ Assistant, using data from a spreadsheet?

Hi all

For my first LabVIEW application, I'm looking to automate the calibration of thermocouples by measuring their response at different temperatures in a dry-well calibrator. I acquire the temperatures of six thermocouples through an SCXI 1303/1102/1600 and have the six channels set up in the DAQ Assistant in one of my subVIs.

I compare these values to the temperature of the calibrator, which I acquire over VISA serial in another subVI. All these values are written to a .csv file.

Can I import this data into the DAQ Assistant to use for calibration? Is there a simpler way to associate calibration data with the channel? Currently, I could manually copy and paste the cells from the spreadsheet into the calibration table, but that just seems silly.

If there is anything I could provide to help solve the problem, let me know!

Thank you!

Hi Zoysiamo,

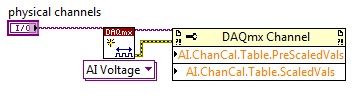

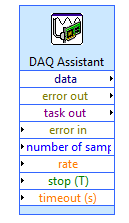

It is possible to automate the calibration with DAQmx at a lower level, in particular with the DAQmx Channel property node. Using it, you can specify the pre-scaled values and the scaled values for your channel. I recommend you take a look at this community example. The property node configuration will be similar to the one shown in the attached images.
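As a rough text-language illustration of the data preparation step (not the LabVIEW property node itself), the sketch below reads measured and reference temperatures from CSV text and produces the two lists that would serve as pre-scaled and scaled values, plus a simple least-squares line. The column names `tc0` and `reference` and the sample numbers are assumptions made for the example; they are not taken from the original post.

```python
import csv
import io


def load_pairs(csv_text, measured_col, reference_col):
    """Return (measured, reference) pairs from CSV text with a header row."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [(float(row[measured_col]), float(row[reference_col])) for row in reader]


def linear_fit(pairs):
    """Ordinary least-squares line: reference ~= slope * measured + offset."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    slope = sxy / sxx
    offset = mean_y - slope * mean_x
    return slope, offset


# Hypothetical log: one thermocouple channel and the calibrator reading.
SAMPLE = """tc0,reference
49.0,50.0
99.0,100.0
149.0,150.0
"""

pairs = load_pairs(SAMPLE, "tc0", "reference")
pre_scaled = [measured for measured, _ in pairs]   # what the channel reports
scaled = [reference for _, reference in pairs]     # what it should report
slope, offset = linear_fit(pairs)
print(pre_scaled, scaled, slope, offset)
```

In LabVIEW, the equivalent would be reading the .csv into two arrays and wiring them to the channel's calibration properties instead of pasting cells by hand.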

Similar Questions

-

How to create different types of analog inputs without using the DAQ assistant?

Hi all

I would like to create multiple analog input channels of different types... I mean I want to have 5 voltage channels and 2 temperature channels...

However, I am not using the DAQ Assistant. I use the "Create Channel" VI.

Can anyone please suggest how to do this? I attach my VI for reference... I have 5 voltages and 2 temperatures, and I want to show these on two separate graphs...

-

Voltage vs. time graph using the DAQ Assistant

I'm relatively new to LabVIEW and to the terms and everything involved in programming. I've read tutorials trying to understand things. One thing I have trouble with is the DAQ Assistant. If I place the DAQ Assistant on the block diagram and have everything set up so that the voltage goes into the DAQ hardware, how would I set up my block diagram to get a graph of voltage vs. time in which data begins recording once the voltage reaches a certain value that I input and can change, such as 30 or 40 volts? The data should also stop recording when the voltage reaches the same number. I also want to be able to multiply the voltage by a number that I can change myself before it is graphed over time. For example, say I want recording to start when the voltage reaches 40 volts. When the voltage comes out of the DAQ Assistant, say it reads 10 volts and the number I want to multiply by is 5. I want to multiply the voltage by 5, and since the result is 50, it would begin graphing this number over time.

You would need a Boolean value that checks whether the (amplified) voltage is greater than N.

If so, you send the value to a graph; if not, the voltage is not graphed.

Here is an example. (Do not try to copy this code exactly, because it does not use a signal, but rather an integer that is generated.)
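Separately from the attached VI, the same gating idea can be sketched in a text language. This is only an illustration under assumptions: the function name `gated_samples` and the sample values are made up, and a plain list stands in for the DAQ Assistant output.

```python
def gated_samples(voltages, gain, threshold):
    """Yield (index, scaled_voltage) only while the scaled voltage exceeds threshold."""
    for index, volts in enumerate(voltages):
        scaled = volts * gain          # user-adjustable multiplier
        if scaled > threshold:         # the Boolean test described above
            yield index, scaled        # this sample would go to the graph


# Hypothetical readings in volts; multiplier 5 and threshold 40 as in the question.
readings = [2.0, 6.0, 8.0, 10.0, 7.0]
plotted = list(gated_samples(readings, gain=5, threshold=40))
print(plotted)
```

In LabVIEW the comparison would feed a Case structure around the graph terminal, with the multiplier and threshold as front panel controls.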

-

Configuration of SCXI-1120D using the DAQ Assistant

Hello

I tried to set up a channel on an SCXI-1120D module, as well as the rest of the SCXI system, using the DAQ Assistant, but ran into a problem related to the signal range.

According to this, http://sine.ni.com/nips/cds/view/p/lang/en/nid/1658, the maximum voltage range is -10 V to 10 V, but when I tried to configure a channel with a range of 0 V to 10 V, it displayed an error indicating that the signal range was invalid and that the available range is -0.005 V to 0.005 V. Could someone suggest what I'm missing or what I need to do to set it up?

Thank you

Chris

Hi Chris,

Thank you for your message.

May I ask what gain you have set in software for the device? The table on page A-5 of the manual for your device, found here, shows what the gain setting must be for a given input range.

I imagine you have the gain set to 100, for which the available range is ±50 mV. For -10 V to 10 V, please set the gain to 0.5.
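The two figures quoted in the reply (gain 100 gives ±50 mV, gain 0.5 gives ±10 V) are consistent with an input range that scales inversely with gain. The sketch below encodes that relationship; the ±5 V full-scale value at gain 1 is an inference from those two numbers, not something stated in the thread, so the table in the manual remains the reference.

```python
def input_range_volts(gain, full_scale_at_unity=5.0):
    """Return the +/- input limit in volts for a given gain.

    full_scale_at_unity is an assumed value inferred from the reply above.
    """
    if gain <= 0:
        raise ValueError("gain must be positive")
    return full_scale_at_unity / gain


for gain in (0.5, 100):
    print(f"gain {gain}: +/-{input_range_volts(gain)} V")
```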

Thank you very much

-

Is the DAQ Assistant compatible with LabVIEW 7?

I have LabVIEW 7 and an NI DAQPad-6015 and am trying to monitor temperature with two thermocouples. I searched some tutorials online, but most suggest using the DAQ Assistant, which is not listed in the input section of my Functions palette. I have downloaded the most recent NI-DAQmx driver that is supposed to be compatible with LabVIEW 7.x. I don't know if this means that it is compatible with LabVIEW 7.0. I still do not see the DAQ Assistant appear.

Any help or suggestion (about the DAQ Assistant or how to program in LabVIEW 7 to solve my problem) is greatly appreciated!

Thank you.

It looks like the latest DAQmx version that will work with LV 7.0 is 8.1.

See here http://digital.ni.com/public.nsf/allkb/97D574BB1D1EEC918625708100596848

-

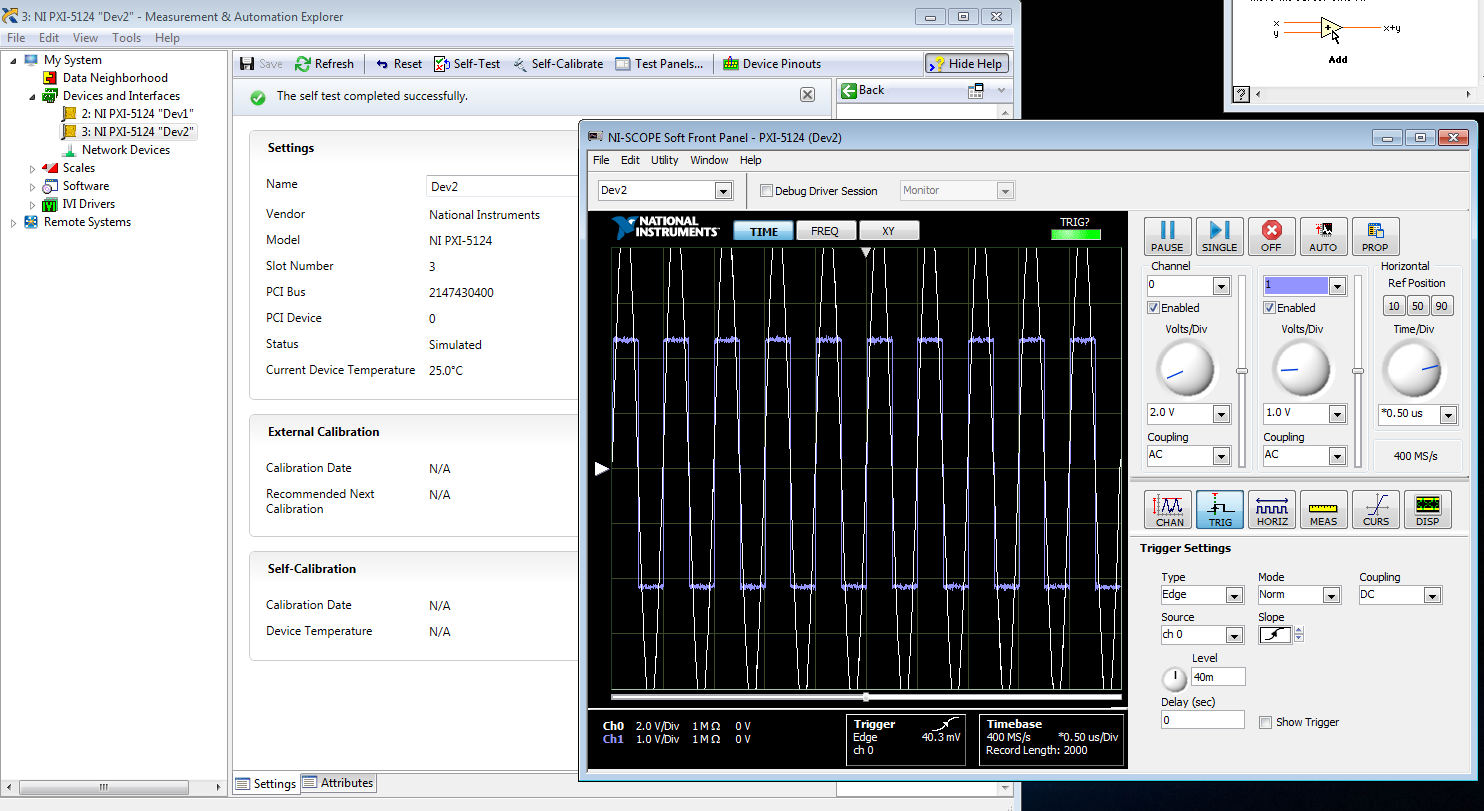

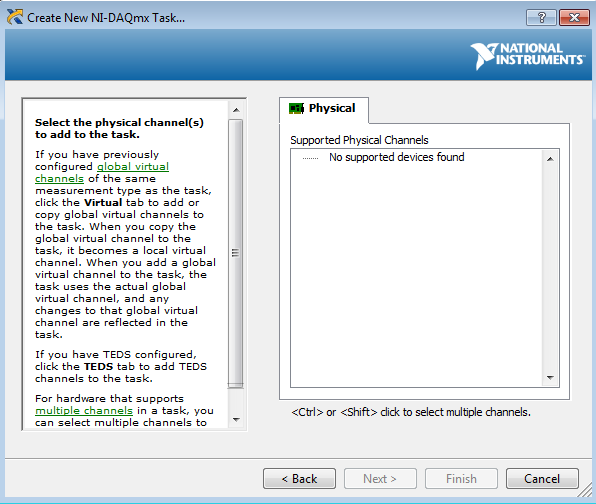

I am trying to set up a development machine where we can test new code without using our physical hardware. I followed this guide to set up a simulated system. I get to step 3.2b, but the device does not appear in the DAQ Assistant. In MAX, the device self-tests and self-calibrates successfully, and when I open the test panels, I see some sort of signal. I guess that's a default simulated input, since I didn't tell the device to look for anything? Note that the two devices I created show up in the Devices and Interfaces section, but even after running self-calibrate, the self-calibration date is still not specified.

When I try to test the device and create a voltage task according to the guide, I can't see a device in the DAQ task creator.

Steps 1 and 2 of this guide are of course met. Step 3 is not, but this is not surprising because a simulated device is not in Device Manager in any case. Also, I'm not running RT, so step 4 is satisfied.

Does anyone have any ideas?

That would be because the PXI-5124 is a digitizer, not an analog input device. You must use the NI-SCOPE driver, not NI-DAQmx.

-

Error -201003 occurred in the DAQ Assistant

Hello. I use a "cDAQ-9178" with "NI 9215" and "NI 9402" modules added.

However, when I run the LabVIEW code, "Error -201003" occurs.

{

Device not available. Possible causes:

Device is no longer present in the system / device is not powered.

Device is powered on, but was temporarily without power / device is damaged

}

(The error appears in the 1st and 2nd figures below.)

(The block diagram is in the figure below.)

Thank you.

It could be something with the driver.

Check this:

Error 201003 in the MAX test panel or while running the DAQ Assistant

http://digital.NI.com/public.nsf/allkb/5413F392D88326148625746B006745C5

In this forum thread, they discuss the same error:

Spontaneous error code 201003 for PCI DAQ setup

http://forums.NI.com/T5/SignalExpress/spontaneous-error-code-201003-for-PCI-DAQ-Setup/TD-p/830707

-

Simulate Signal wired to the DAQ Assistant for a USB-6009 device

Hello

I'm trying to send a signal to the DAQ Assistant Express VI. I watched the "Generating a Signal" video on the NI website (www.ni.com/academic/students/learnlabview/generate.htm) and I have my Simulate Signal connected directly to the DAQ Assistant, as shown in that video. In my case, the DAQ Assistant sends the signal to a USB-6009 device.

However, I received this message:

Error -200077 occurred at the DAQ Assistant

Possible reasons: Requested value is not a supported value for this property. The property value may be invalid because it conflicts with another property.

Property: SampTimingType

Requested value: Sample Clock

You can select: On Demand

If I select 'On Demand' in my DAQ Assistant and run the VI, everything works beautifully. However, I need my DAQ Assistant to be configured to generate a continuous AC waveform, not to output a single AC value.

What is happening here? I did not have this problem before on other NI devices. I am using LabVIEW 2010.

Please answer.

Thank you.

-

Multiple inputs to the DAQ Assistant

Hello

I'm feeding my DAQ Assistant several Boolean inputs (formed into an array) where there is 1 digital output. (See the attached software folder.)

Physically, I want a valve to open and close in a certain pattern, where the user can set up/control this pattern before the program starts.

I think that the best way to do it is to have multiple Boolean values that the user can press or unpress.

Before that, I tried with only 1 Boolean, which worked perfectly.

As seen in the attachment (error), it is possibly an easy problem to solve, but I just can't figure it out; I'm stuck on my existing solution.

I use a USB-6008.

I hope there is a kind soul who can help me out.

Best regards

Kenneth G. Vejen

Hi Kenneth.

When the output generation mode is set to "1 Sample", it means that whenever you call the DAQ Assistant it will generate 1 sample. In order to generate 5 samples, you must therefore call it 5 times.

I have attached a modified version of your VI, which shows a way to achieve it. However, be aware that the samples will be generated quickly and not at the 100 ms rate your loop runs at. Whether that is how you want the samples to be output depends on your application.
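The "one call per sample" behaviour can be sketched in a text language as a loop over the Boolean pattern. This is not the attached VI: `write_sample` is a hypothetical stand-in for a single DAQ Assistant call, and the optional wait between calls is one possible way to pace the output if the fast back-to-back generation is not what the application needs.

```python
import time


def play_pattern(pattern, write_sample, period_s=0.1, sleep=time.sleep):
    """Write one Boolean sample per call, waiting period_s between calls."""
    for state in pattern:
        write_sample(bool(state))   # one call generates exactly one sample
        sleep(period_s)             # software pacing; not hardware-timed


# Demonstration with a list standing in for the digital output line.
written = []
play_pattern([True, False, True, True, False], written.append, period_s=0.0)
print(written)
```

Software waits like this are only approximate; for precise valve timing a hardware-timed output would be the safer choice where the device supports it.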

-

I've just upgraded from LV 2009 SP1 to LV 2010. I use 32-bit LV on a 64-bit computer.

When I open the DAQ Assistant, I get a pop-up window that says "LabVIEW: An exception occurred in the external code that is called by a Call Library Function Node. This could have corrupted the memory of LabVIEW. Save all work to a new location and restart LabVIEW. VI "Advanced Timing.vi:1" was stopped at node "" at a call to "get of DAQmxAssitant_DAQmx IO Info.vi of control"."

If I hit OK, the DAQ Assistant locks up; if I use Task Manager to close it, LabVIEW crashes.

I already reinstalled the DAQ device driver 9.5.1, without success.

There is no such version. The most recent is 9.2.2.

-

Units of number of samples and rate in the DAQ Assistant

Hello

I use the DAQ Assistant for an analog voltage input on an NI data acquisition card. What is the difference between the rate and the number of samples in the DAQ Assistant, and what are the units of the two?

Thank you.

The number of samples is how many discrete measurements to take. Rate (per second) is how fast to acquire the specified number of samples.

If number of samples is 100 and the rate is 1000 samples per second, then the acquisition would take 0.1 second (100 / 1000).

-AK2DM
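The arithmetic above can be written as a one-line helper. The function name is made up for illustration.

```python
def acquisition_time_s(num_samples, rate_hz):
    """Time in seconds to acquire num_samples at rate_hz samples per second."""
    if rate_hz <= 0:
        raise ValueError("rate must be positive")
    return num_samples / rate_hz


duration = acquisition_time_s(100, 1000)
print(duration)
```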

-

Hi all

This should be a pretty simple question, but I can't seem to find the answer online and currently do not have the means to test this:

I'm using LabVIEW 8.5 and have a VI that imports sensor data through the DAQ Assistant. In the configuration tab, there is a signal input range. What happens if my sensor exceeds this range? Do I get a warning? Does the value default to the maximum (or minimum)? I was interested in writing code to display an error as I approach the limits of this range, but did not know if I also need to include code to display an error if the range is exceeded.

Thanks for the help,

Tristan

Hello Tristan,

The behavior depends on the selected range and the device you are using.

If you are using a device with only a single valid input range, we will use that range, even if you set a smaller minimum and maximum in the DAQ Assistant. So, if your device only supports ±10 V and you set the range to ±8 V, you will still continue to get valid data after your sensor goes above 8 V, until you approach 10 V. When you reach the limit of your device's range, the output will 'rail' and simply return the maximum value until the signal is less than the maximum value again.

Note: A device that is nominally ±10 V usually has some overrange (such as ±10.2 V), which is usually specified in the manual.

However, if you use a device with several input ranges, then things become more complex.

The NI-DAQmx driver will choose the smallest range that entirely covers the interval you choose. For example, suppose that your device supports the following input ranges: ±0.2 V, ±1 V, ±5 V, ±10 V, and you choose 0 V to 3 V as the range in the DAQ Assistant. The NI-DAQmx driver will look at the requested input range and the list of input ranges that your hardware supports, and choose the smallest one that encompasses the entire range you set. This would be ±5 V, because it is the smallest range that contains values up to 3 V. Thus, any input signal within ±5 V is returned, and anything outside this range will 'rail' to the maximum or minimum value.

We do this because using smaller ranges makes more efficient use of the ADC's resolution. So, we try to use the most efficient range based on what you request, without picking a range that will make you miss data.
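The selection rule described above can be sketched as follows. This is an illustration of the described behaviour, not driver code; the function names are made up, and the ranges are treated as symmetric ± limits as in the example.

```python
def pick_range(supported_limits, requested_min, requested_max):
    """Return the smallest symmetric +/- limit that covers the requested interval."""
    candidates = [
        limit for limit in supported_limits
        if -limit <= requested_min and requested_max <= limit
    ]
    if not candidates:
        raise ValueError("requested interval exceeds every supported range")
    return min(candidates)


def rail(value, limit):
    """Clamp a reading to +/- limit, as happens when the input saturates."""
    return max(-limit, min(limit, value))


limit = pick_range([0.2, 1, 5, 10], 0, 3)   # the example from the reply
print(limit, rail(4.2, limit), rail(7.5, limit), rail(-6.0, limit))
```

A 4.2 V signal is still returned unchanged even though it exceeds the requested 3 V maximum, because it falls inside the selected ±5 V range.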

Let me know if I can clarify it more.

-

Precise triggering of voltage input and output generation in the DAQ Assistant

Hello

I wonder if anyone has come across a similar problem with the synchronization of input and output voltage. I use LabVIEW 11 and an NI USB-6259. I have been using the DAQ Assistant to configure the input and output channels. In particular, my task is to generate a single rectangular "pulse" as the output voltage to drive a coil and, once the pulse has finished, to acquire a signal from a magnetic field sensor and compute a power spectrum. This means that the order and the duration in which the DAQ Assistants are used are extremely important. For example, the output voltage channel must be opened first for 2 seconds. Subsequently, the input voltage channel must be open for 1 second, during which the sensor signal is acquired and post-processed. Only after these tasks are performed in this order can they be repeated in a loop until the experiment is over. I don't know how to trigger the DAQ Assistants (one for the voltage input and the other for the voltage output) correctly. Is there a trick?

Cheers

Michael

Hi Dave,

Thank you. I wired the error clusters, but the timing issue was unrelated to that. In the DAQ Assistant, I simply had to change the acquisition mode between 'Continuous Samples' and 'N Samples' for the input and output voltage, and all works fine now.

Thanks again

Michael

-

New Daq with the Daq Assistant in the filtering code

Hei,

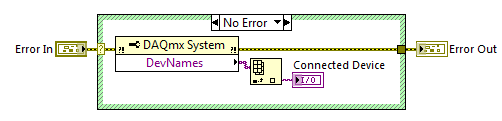

I have an NI USB-6225 DAQmx device that I have used for a couple of years. When I started with LabVIEW, I found the DAQ Assistant to be the best way to measure voltage etc. with my DAQ. My company has purchased another NI USB-6225 and now I have a big problem: the DAQ Assistant in my old VI does not work with the new DAQ device. I understand why there is this problem, but I do not know how to solve it. I found this code on the forum that finds which DAQ is connected:

The problem is that the DAQ Assistant does not have an input for it, and it gives me an error if I try to run the code with a device other than the one I used when I created the code.

Is there a way to solve this, so I don't have to convert all the DAQ Assistants to normal code?

Hello again,

Two options:

(1) As the old software is tied to 'Dev1', you must rename your new device to this alias and remove/rename the old (and missing) device.

(2) Rewrite your old software so that it does not depend on the alias name of the data acquisition card...

It's your choice!

-

Switch between graph and table as in the DAQ Assistant

I was wondering if it is possible, or if anyone has had any success, making a user interface like the DAQ Assistant's, where you can switch between a graph and a table of the recorded signals. I would like to have this feature in my VI. Right now, I just have a waveform graph on my front panel. I'd love to be able to alternate between this waveform graph and a table of output values. I have attached a few screenshots to explain what I mean.

Hello

Personally, I like the Tab control ideas that have been suggested, but you can also use property nodes and the Visible property.

Good luck.

Steven
