Precise triggering voltage input and output generation in the DAQ Assistant

Hello

I wonder if anyone has come across a similar problem with synchronizing input and output voltage. I use LabVIEW 11 and an NI USB-6259, and I have been using the DAQ Assistant to configure the input and output channels. My task is to generate a single rectangular pulse as the output voltage to drive a coil and, once the pulse has ended, to acquire a signal from a magnetic field sensor and compute its power spectrum. This means that the order and duration of each DAQ Assistant call are extremely important. For example, the voltage output channel must first be open for 2 seconds. Then the voltage input channel must be open for 1 second, during which the sensor signal is acquired and post-processed. Only after these tasks have run in this order can the sequence be repeated in a loop until the experiment is over. I don't know how to trigger the two DAQ Assistants (one for voltage input and the other for voltage output) correctly. Is there a trick?

See you soon

Michael

Hi Dave,

Thank you. I wired the error wires, but the timing issue was unrelated to that. In the DAQ Assistant, I simply had to switch the acquisition mode from continuous samples to "N Samples" for both the input and the output voltage, and everything works fine now.

Thanks again

Michael
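
As a rough illustration of the "N Samples" fix above, the sketch below works out the finite buffer sizes for one cycle: a 2 s rectangular output pulse followed by a 1 s acquisition window. The sample rates (1 kS/s out, 10 kS/s in), the 5 V amplitude, and the helper names are assumptions for illustration only; none of them come from the original post.

```python
def rectangular_pulse(amplitude_v, duration_s, rate_hz):
    """Return the samples of one finite rectangular pulse, ending at 0 V."""
    n = round(duration_s * rate_hz)
    # Hold the amplitude for the whole pulse, then append one 0 V sample
    # so the output returns to zero once the finite generation is done.
    return [amplitude_v] * n + [0.0]


def samples_to_read(duration_s, rate_hz):
    """Number of samples an 'N Samples' read needs to cover duration_s."""
    return round(duration_s * rate_hz)


# Assumed values (not from the post): 5 V pulse, 1 kS/s output, 10 kS/s input.
pulse = rectangular_pulse(amplitude_v=5.0, duration_s=2.0, rate_hz=1_000)
n_in = samples_to_read(duration_s=1.0, rate_hz=10_000)

print(len(pulse))  # number of output samples to write for the 2 s pulse
print(n_in)        # number of input samples to read for the 1 s window
```

With a finite 1 s acquisition window, the bin spacing of the resulting power spectrum is 1 Hz (the sample rate divided by the number of samples), whatever input rate is chosen.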

Tags: NI Software

Similar Questions

-

USB & Firewire audio interface ports still work as input and output?

I guess everyone has to start somewhere, even if the answer to my question is taken for granted by almost everyone, perhaps with good reason. What I don't know is whether the single USB or FireWire port on an audio interface ALWAYS functions as both input AND output. Whenever I read product information about audio interfaces, it is generally assumed that most people buy an audio interface for RECORDING. So when people talk about connecting one to their Apple computer, iMac or MacBook Pro, they treat the USB connection as an INPUT. That's all well and good. But in my case, I want to use the USB port as an output (not the mini jack) and go into an audio interface that gives me a balanced output signal that I can plug into my powered studio monitor (which has only a balanced XLR input). All of the examples I see with audio interfaces address recording and use the USB connection as the audio input.

So my question is: can the one USB port I see on any number of audio interfaces always function as both input and output? I assume so, but if so, why doesn't any site mention this fact, and why don't the manuals show the hookup to studio monitors in their diagrams? This may be obvious to some, but I intend to use not a stage piano but rather a keyboard/MIDI controller attached to the iMac so that I can use virtual instrument software. I need to go from the controller to the iMac, then from the iMac into balanced powered monitors. The balanced inputs of the speakers require more than a simple adapter to give them a balanced signal. But nobody talks about audio interfaces except as a way into the computer for recording. What about my situation? Why don't they include this example? And why should they assume that a novice will automatically KNOW that the USB port on an audio interface also works as an output if they never mention this example or setup? I guess it does operate in both directions! But am I crazy to wonder, when no one ever mentions or shows this configuration? It was suggested that I buy something like the Steinberg UR22mkII, which has one USB port. Even the Steinberg site speaks only of recording, and so of using the USB port as an input for recording software. There is no mention of using it to go out of the Mac into balanced speaker inputs, even in the manual. In fact, this is true of every audio interface manual that I have looked at, even from other manufacturers! Why do they all assume that a novice like me (whose money is just as good as the experienced user's) KNOWS that? It's frustrating!

I know that this is not strictly a Logic question, but I guessed, I hope correctly, that the Logic user community would be a better place for my question than other communities. If I'm wrong, please help me redirect the question. Thank you.

It is the interface itself that determines whether it can send and receive audio or MIDI, not the USB or FireWire port, both of which are I/O ports.

All USB and FireWire MIDI devices are inputs and outputs.

All USB and FireWire audio devices are inputs and outputs.

Not every USB device or piece of external hardware with a USB port can handle both MIDI and audio. Some do; most handle just MIDI or just audio.

The Steinberg UR22mkII handles both audio and MIDI.

However, I do not recommend USB 2.0 audio devices. There are simply too many cases of problems after major OS X updates with such devices, especially when they are class compliant (i.e. driverless), even if the Steinberg UR22mkII is supposed to be compatible with 10.11.

Others' views may vary, because this is just a personal opinion based on my past experience in my studio and on the many issues presented here and elsewhere.

I'm sticking with MOTU equipment for all my audio devices, and I use iConnect devices for my MIDI needs.

-

analog input and output synchronization

Hello everyone,

I seem to have a problem synchronizing the analog input and output on my M-series USB-6211. My application is quite simple: I want to generate and acquire a sinusoid at the same time. In theory, I should see the same 4000 data points on the input and output channels. In reality, an oscilloscope capture shows that the analog output puts out more than 4000 data points, while the input (acquisition) shows 4000 samples. Below is an excerpt of the task creation, timing, and execution code. I'm afraid that the analog input and output are not tied together correctly. Do you see anything suspicious? Thank you very much!

Task creation:

DAQmxCreateTask("", &inTaskHandle);
DAQmxCreateTask("", &outTaskHandle);

Analog output channel configuration, at 20 kS/s:

DAQmxCreateAOVoltageChan(outTaskHandle, physChanOut, "", -10, 10, DAQmx_Val_Volts, NULL);
DAQmxCfgSampClkTiming(outTaskHandle, "OnboardClock", 20000, DAQmx_Val_Rising, DAQmx_Val_ContSamps, 4000);

Analog input channel configuration:

DAQmxCreateAIVoltageChan(inTaskHandle, physChanIn, "", DAQmx_Val_RSE, -10, 10, DAQmx_Val_Volts, "");
DAQmxCfgSampClkTiming(inTaskHandle, "OnboardClock", 20000, DAQmx_Val_Rising, DAQmx_Val_ContSamps, 4000);

Trigger setup:

sprintf(local_port, "/%s/ai/StartTrigger", deviceName);
DAQmxCfgDigEdgeStartTrig(outTaskHandle, local_port, DAQmx_Val_Rising);

Output:

DAQmxWriteAnalogF64(outTaskHandle, (numberOfSamples * oversample), 1, 40, DAQmx_Val_GroupByChannel, input, &sampsPerChanWritten, NULL);

Acquire:

DAQmxReadAnalogF64(inTaskHandle, 4000, 40, DAQmx_Val_GroupByChannel, readArray, 8000, &sampsPerChanRead, NULL);

Stop the tasks:

DAQmxStopTask(outTaskHandle);
DAQmxStopTask(inTaskHandle);

Hello

Changing the sampling mode from continuous to finite seems to solve the problem:

DAQmxCfgSampClkTiming(inTaskHandle, "OnboardClock", 20000, DAQmx_Val_Rising, DAQmx_Val_FiniteSamps, 4000);

Also, I meant to say earlier that the write should be 4000 samples:

Output:

DAQmxWriteAnalogF64(outTaskHandle, 4000, 1, 40, DAQmx_Val_GroupByChannel, input, &sampsPerChanWritten, NULL);

Thank you
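
To see why the sample mode matters for the sample counts discussed in this thread, here is a minimal arithmetic sketch. It is a simplified model, not DAQmx code: it assumes a continuous sample-clocked task keeps clocking out samples until it is stopped, while a finite task stops after its configured count. The function name and the 0.25 s elapsed time before the stop call are hypothetical.

```python
def samples_generated(rate_hz, elapsed_s, buffer_len, finite):
    """Samples a sample-clocked output has produced after elapsed_s seconds."""
    clocked = int(rate_hz * elapsed_s)
    # A finite task stops after buffer_len samples; a continuous task keeps
    # running (regenerating its buffer) until the task is stopped.
    return min(clocked, buffer_len) if finite else clocked


RATE_HZ = 20_000   # sample clock rate from the thread
N_SAMPLES = 4_000  # samples per channel from the thread

# Time needed to generate exactly 4000 samples at 20 kS/s.
print(N_SAMPLES / RATE_HZ)

# Assumed: the stop call arrives 0.25 s after the start trigger.
print(samples_generated(RATE_HZ, 0.25, N_SAMPLES, finite=False))
print(samples_generated(RATE_HZ, 0.25, N_SAMPLES, finite=True))
```

Under these assumptions the continuous case overshoots the 4000 samples seen on the input side, which is consistent with the extra output samples observed on the oscilloscope.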

-

AME corrupts input and output when converting AVI to MPEG2 files

- AME 7.0.1.58 (64-bit)

- All CC: Photoshop, Premiere, SpeedGrade, AE

- All applications installed and updated yesterday

- Operating system is Win 7 64-bit SP1

- AME through CC membership

- Footage is uncompressed AVI from AE CC, being converted to MPEG2 .mpg

- No error message, just corrupt frames created in the input and output files. The input files are checked OK before encoding.

- Preset used is Match Source with quality at 100 and Use Maximum Render Quality checked.

- It previously worked OK in AME CS 5.5 (CS 5.5 is now also corrupted and shows the same behavior)

- No third-party I/O hardware

I would be very grateful for help.

RESOLVED-

OK, so just in case someone else has the issue, the workaround is to export from AE directly to AME; do not use the AE render engine.

-

Synchronization of the inputs and outputs with different sampling frequencies

I'm relatively new to LabVIEW. I have an NI myDAQ, and I am trying to accomplish the following:

Square wave output at 10 kHz, 50% duty cycle.

Input sampling frequency of 200 kHz, synchronized with the output so that I get 20 analog input samples per square wave period and know which samples align with the high and low output of my square wave.

So far, I have used a counter to create the 10 kHz square wave, output on a digital line. I tried to follow this document (http://www.ni.com/white-paper/4322/en), but I'm not sure how to sample at a different rate than my clock pulse. It seems that this example is intended for sampling one analog input per clock pulse. Is there a way to create a faster clock (200 kHz) in software and use it to synchronize the analog input acquisition as well as a slower 10 kHz square wave output?

I eventually have to use the analog inputs to acquire data and an analog output to write data, so I need the square wave pulse output on a digital pin.

How could anyone do this in LabView?

Hi Eric,

All subsystems (AI, AO, CTR) derive their clocks from the STC3, so they don't drift, but in order to align your AI sample clock with the pulse train that you generate on the counter, you'll want to trigger one task off the other. I would start with a few examples from the Example Finder > Hardware Input and Output > DAQmx. You can trigger the AI off the pulse train. Start with Gen Dig Pulse Train-Continuous.vi; you probably already use a VI like this to generate the 10 kHz pulse train. For AI, start with an example like Cont Acq&Graph Voltage-Ext Clk-Dig Start.vi. You'll want to use the internal clock, so just remove the "Clock Source" control and it will use the internal clock. From there, simply set the "Trigger Source" to be either the PFI line that the counter outputs on, or '/<device name>/Ctr0InternalOutput', assuming that you are using counter 0. You'll want to make sure that the AI task is started before the counter task, so that the AI is ready to trigger off the first pulse. They should be aligned at this point. For debugging, you can use DAQmx Export Signal to export the sample clock; you can then scope the PFI line and the pulse train to make sure that they are aligned.

Hope this helps,

Andrew S
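
Once the AI task is triggered off the pulse train as described above, the mapping from sample index to square wave level is simple arithmetic. The sketch below is an idealized model, assuming the AI start trigger fires on a rising edge of the 10 kHz pulse train with no delay; the names are hypothetical and no DAQmx calls are involved.

```python
AI_RATE_HZ = 200_000    # analog input sample rate from the question
PULSE_RATE_HZ = 10_000  # counter pulse train frequency from the question
DUTY_CYCLE = 0.5        # 50% duty cycle from the question

# 200 kHz / 10 kHz gives the number of AI samples per square wave period.
samples_per_period = AI_RATE_HZ // PULSE_RATE_HZ
high_samples = int(samples_per_period * DUTY_CYCLE)


def level_of_sample(index):
    """Return 'high' or 'low' for AI sample number index (0-based).

    Assumes sample 0 is taken at a rising edge of the pulse train.
    """
    phase = index % samples_per_period
    return "high" if phase < high_samples else "low"


print(samples_per_period)
print([level_of_sample(i) for i in range(samples_per_period)])
```

In this model the first sample of each half period coincides with an edge, so on real hardware those two samples may land on the transition itself; scoping the exported sample clock against the pulse train, as suggested in the reply, would show the actual alignment.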

-

Do they make an internal sound card for the Mac Pro 3,1?

And if so, where can I buy one?

I need more inputs and outputs to attach to my PA and recording hardware, as I do with a Windows PC.

The Mac comes with one microphone input and a single output, which gives terrible results when it is connected to my sound equipment.

A few people responded to your earlier post. It might be better to explain what your needs are there, rather than starting a new discussion here.

-

Individual access to the inputs and outputs on a single port (PXI-6509)

Hello

I use a PXI-6509, and this sentence taken from the manual:

"You can use each of the DIO lines as a static digital input (DI) or digital output (DO) line"

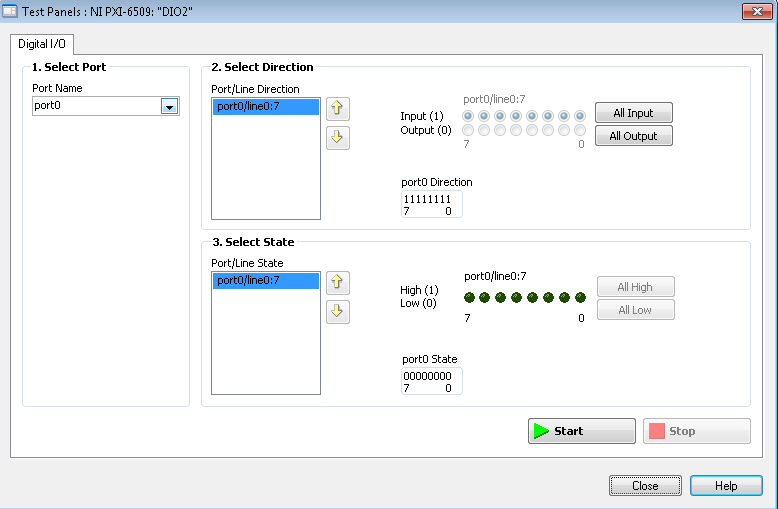

leads me to believe that each individual DIO line can be defined as input or output (even within a port), but this picture:

shows that these ports can be defined as inputs and outputs in the same port.

On another card with DIO, the 6284, I can set them individually.

Can someone confirm which is correct for the 6509?

Best regards

Adrian

-

Synchronized analog input and output on myRIO

Hello!

A shiny new myRIO just landed on my desk and I'm looking forward to learning how to use it.

I have a question about the capabilities of the default FPGA personality.

Is truly hardware-synchronized analog input and output possible? Can you configure the necessary trigger and clock routing from within an RT VI? At, say, ~100 kS/s?

Or do I need to delve into an FPGA design to achieve this?

Thank you!

You will not be able to get your RT loop to run reliably at rates greater than 5 kHz, and we generally do not recommend trying to control I/O faster than 500 to 1000 Hz. This isn't a limitation of the default personality itself; it's just that some tasks are better suited to the RT processor and some are better suited to the FPGA (this is important to understand when developing an application on the myRIO). Synchronizing input and output of ~100 kS/s signals is something that you have to do on the FPGA.

http://www.NI.com/Tutorial/14532/en/

There are some good tutorials at the link above. They use the cRIO instead of the myRIO, but the functionality is basically the same. The biggest difference is that you won't have to add modules to your project, because all the inputs and outputs of the myRIO are fixed and should populate automatically when you add an FPGA target to your project.

-

Make sure that you wire all the inputs and outputs of your Call Library Function Node?

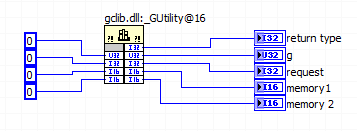

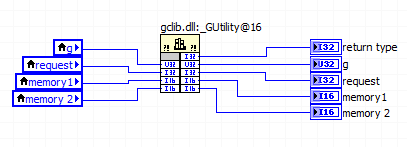

This document says "Make sure that you wire all the inputs and outputs of your Call Library Function Node."

But are all the terminals on the right side of the Call Library Function Node considered the "outputs" referred to in that statement?

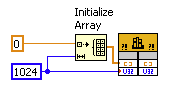

The same document goes on to show the right way to allocate memory with this illustration, and in the illustration the right-hand "outputs" are left unwired.

Am I right in assuming that the only terminals that count as outputs are those that the DLL code uses (modifies) as outputs? If that is true, then all the other output terminals are associated with input-only values and so don't really count as outputs, correct?

In the Call Library Function Node parameter configuration screen there is a "Constant" check box, and the help for it says "indicates whether the parameter is a constant." What does this check box do for me when setting up the DLL call?

Finally, assuming a DLL call that is supposed to write to these five outputs, is it legitimate to use constants like this to reserve memory space for the output values?

What if local variables associated with the output terminals are used instead?

Despite the linked document, it is not necessary to wire the corresponding input for simple scalar output parameters (for example, a numeric). LabVIEW automatically allocates memory for them. If you do want to wire the inputs for all the outputs anyway, there should be no difference between a constant and a local variable; I would use a constant to avoid unnecessary local variables.

For parameters that are inputs only, there is no need to wire the output side. This is a bit of a simplification, since strictly speaking all parameters are inputs only and you get only one result (the return value); for an "output" you pass a memory address and the function modifies the content at that address, but LabVIEW handles this pointer dereferencing for you. If you really want to get into the details, read up on pointers in C.

The "Constant" check box acts like the "const" qualifier on a C function parameter. It tells the compiler that the function you are calling will not change this parameter. If the prototype of the function you are calling includes a const parameter, then you should mark that parameter as constant when you configure the Call Library Function Node. Otherwise, I wouldn't worry about it.

-

Digital input and output problem

Hello:

I am running a test of digital I/O:

I write an array of digital values to a digital output of the DAQ device and read them back on a digital input to detect the output signal.

(Basically, it's a loopback outside the hardware.) It's pretty simple, as shown in the attached file_1.

It works well.

The manual switch controls the light, which means that the inputs and outputs are OK. Then, to speed things up, I moved to the low-level DAQ functions, as in the attached file_2.

But it does not work. In particular, when I press the "stop" button to exit the loop, an error occurs. Possible reasons:

Requested value is not a supported value for this property.

The property value may be invalid because it conflicts with another property.

Property: SampTimingType

Requested Value: Sample Clock

You Can Select: On Demand

I don't understand why the sample timing has a conflict here.

(It is probably just something very simple in data acquisition, but I checked a few examples and did not find a clue.)

I hope someone can give me a suggestion. Ultimately, my goal is to build the attached file_3.

In this one, I generate a digital output and then route it to the input.

Then I can use it as a signal to trigger my other task. Note:

I use a continuous analog signal to control one of my devices.

I need to sync it with another task of mine.

So I plan to generate a digital output (which shares the same clock as the analog signal on the DAQ device), then feed it into one of the digital inputs.

By detecting this digital input, I can trigger my task and synchronize it with the analog signal.

My device is a USB-6211.

I am aware of the USB latency, but as long as it is constant, the synchronization is still good enough for me.

Actually, I was using an analog input to do this before. It works, but the synchronization error is too big for me.

I need to do some calculation and decision-making on the analog value, which makes the time difference vary.

So I'm trying a digital input now, and I hope that the digital input can trigger my task with a stable latency. Thank you very much

Have you looked at the specs? They clearly state that the digital I/O is software timed. You do not have any hardware clock at all. The best rate that you could possibly achieve is around 1 kHz, and that would have considerable jitter due to the non-deterministic nature of Windows.

-

synchronize the inputs and outputs on the USB-4431

Hello

I have an application that needs to send a signal out of the USB-4431 and then capture it with an input on the same device.

Currently I use two tasks to do this, one for input and one for output. I discovered that a trigger (on the RTSI bus) can synchronize the start of the send and capture operations so that there is a constant offset between the captured signal and the output signal.

Unfortunately, the code I found is for MATLAB. I can't find an equivalent for it in the NI-DAQmx C API. The method is described here: http://www.mathworks.in/help/daq/synchronize-analog-input-and-output-using-rtsi.html.

What I can't understand is how to implement this on the analog input:

ai.ExternalTriggerDriveLine = 'RTSI0';

Can someone shed light on how to do it?

The rest of the things described there seem to be feasible with a normal trigger:

ao.TriggerType = 'HwDigital';
ao.HwDigitalTriggerSource = 'RTSI0';
ao.TriggerCondition = 'PositiveEdge';

Thank you

Nirvtek

You can synchronize the AI and AO by sharing the start trigger between your two tasks.

Choose one of your tasks as the "master" and the other to be the "slave" (either way works).

Set up a digital edge start trigger on the slave task, and set the source of the trigger to be the master's start trigger.

Assume the following:

The name of your device is 'Dev1'.

AI is the master task

AO is the slave task

Here's what you would do to sync the two:

(1) Create the AI and AO tasks in whatever order you want.

(2) Set up timing on the AI and AO tasks (the sampling rates you choose must be the same or a power-of-2 multiple of each other (for example 100 k, 50 k, 25 k, 12.5 k, etc.)).

(3) Configure your slave task (the AO task) to have a digital edge start trigger, and make its source the start trigger of the master task (the AI task). In our case, "/Dev1/ai/StartTrigger".

(4) Write data (a sine wave, presumably) to your AO task.

(5) Start the slave task (the AO task). The AO task is now in the 'Started' state, but since you've set up a digital edge start trigger, it won't actually generate data until it sees a digital edge on "/Dev1/ai/StartTrigger".

(6) Start the master task (the AI task). The AI task does not have a digital edge start trigger, so the software immediately generates a start trigger, which also causes a digital edge on "/Dev1/ai/StartTrigger", which causes the AO task to start at the same time.

(7) Read from your AI task.
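
Two details in the steps above lend themselves to a quick pre-flight check before any hardware is touched: the terminal name used in steps (3), (5) and (6), and the rate rule from step (2). The sketch below is plain Python with hypothetical helper names; it only encodes the rule as stated in this reply and does not call NI-DAQmx.

```python
def start_trigger_terminal(device, subsystem="ai"):
    """Build the terminal name of a task's start trigger, e.g. for Dev1."""
    return f"/{device}/{subsystem}/StartTrigger"


def rates_compatible(rate_a_hz, rate_b_hz):
    """True if the rates are equal or differ by a power-of-2 factor."""
    hi, lo = max(rate_a_hz, rate_b_hz), min(rate_a_hz, rate_b_hz)
    ratio = hi / lo
    if ratio != int(ratio):
        return False
    r = int(ratio)
    # A positive integer is a power of two when it has exactly one bit set.
    return (r & (r - 1)) == 0


# Start order from steps (5) and (6): the slave is armed before the master.
START_ORDER = ["AO (slave)", "AI (master)"]

print(start_trigger_terminal("Dev1"))
print(rates_compatible(100_000, 12_500))
print(rates_compatible(100_000, 30_000))
print(START_ORDER)
```

Checking the rates up front is only a convenience; the device driver remains the authority on which rate combinations are actually accepted.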

You will notice a few zeros at the beginning of your AI data. This is a result of something called "filter delay", and it is an inherent characteristic of all DSA devices; see the DSA user manual and this article for more information on what it is and how to cope with it.

I hope this helps.

EDIT: I just noticed that you pointed to an existing C example. It's exactly what you want. I don't know why you get a resource reserved error; I tried it myself and (after changing the AO range from +/-10 V to +/-3.5 V) it works beautifully. Try resetting your device in MAX (or call DAQmxResetDevice() from your program).

-

Why doesn't the USB-6509 allow setting input and output on individual ports?

I have one of the new USB-6509 (96 digital I/O channels) boards. It has 12 ports of 8 bits each. The manual says that you can define the inputs and outputs on a per-port basis. When I read an 8-bit port, I find that it clears other ports that I have set up as digital outputs. They seem to be grouped into 3 groups of 4: 0-3, 4-7 and 8-11. When I read from any single port within a group, it clears the other ports in that group that I am trying to use as outputs. The result is that I can only assign inputs and outputs in groups of 4 ports. Unfortunately, we have built custom hardware based on the assumption that we could control the inputs and outputs on a port-by-port basis. I see this problem with my LabVIEW 7.1 program (see attached) as well as in Measurement & Automation Explorer. Has anyone out there seen this problem with the USB-6509? Is anyone using it successfully? I also notice strange problems at power-on, but I have yet to work out the pattern. I'm wondering if I have defective hardware or if this new hardware still has problems. I tried NI-DAQ 8.7.1 and 8.8. Same problem with both. Thank you.

Thanks for the replies. It was determined that the USB-6509 we have has a hardware malfunction that is causing the ports to interfere with each other. We are returning it to NI for repair or replacement. For now, we are using a PCI-DIO-96, which works correctly. Thank you, Andy

-

Configure 9401 for buffered input and output

I have a CompactDAQ chassis (9174) and a 9401 module. I found the example for configuring the inputs and outputs separately. But when I try to apply that to my application, I get the error:

Device cannot be configured for input or output because lines and/or terminals on this device are in use by another task or route. This operation requires temporarily reserving all lines and terminals for communication, which interferes with the other task or route.

If possible, use DAQmx Control Task to reserve all tasks that use this device before committing any tasks that use this device. Otherwise, uncommit or unreserve the other task or disconnect the other route before attempting to configure the device for input or output.

Device: 9401-0

Digital Port: 0

Lines: 0, 1, 2, 3

Task Name: _unnamedTask

I tried starting from the example that works and adding just those bits. I think it has something to do with the fact that I use buffered, sample-clocked output, but I'm not sure. I found the correlated output example and essentially modified it to generate the waveform that I need. That part worked fine. But when I then tried to add the input, it did not work. The related example that I tried, with line0:3 as output and line7 as input on the 9401 and using the chassis counter as the clock source, is attached.

Is there something about buffered outputs and inputs that does not allow using both at the same time? Or what am I missing?

Found my problem. The RESERVE has to happen just before the start of the task. Trying to change the sample clock or anything else after the reserve leads to problems.

-

Using the same PIN for input and output

Hello

I would like to use a single pin for input and output.

I'm experimenting with writing a driver for the DHT11, which uses a single-wire interface.

I have the following code to open the pin, but it fails:

GPIOPin dhtPin = (GPIOPin) DeviceManager.open (new GPIOPinConfig (0, 17, GPIOPinConfig.DIR_BOTH_INIT_INPUT, GPIOPinConfig.DEFAULT, GPIOPinConfig.TRIGGER_NONE, false));

VM - iso [DAAPI] =-1: not supported direction was placed for 17 GPIO pin number. Open failed

jdk.dio.InvalidDeviceConfigException

-com/oracle/deviceaccess/gpio/impl/GPIOPinImpl.openPinByConfig0 (), bci = 0

com/oracle/deviceaccess/gpio/impl/GPIOPinImpl. < init > (), bci = 87

-com/oracle/deviceaccess/gpio/impl/GPIOPinFactory.create (), bci = 6

-com/oracle/deviceaccess/gpio/impl/GPIOPinFactory.create (), bci = 3

-jdk/dio/DeviceManager.openWithConfig (), bci = 49

-jdk/dio/DeviceManager.open (), bci = 6

-jdk/dio/DeviceManager.open (), bci = 2

-dht11 / DHT11. < init > (DHT11.java:42)

-dht11 / DHT11. < init > (DHT11.java:37)

-dht11/DHT11Midlet.startApp(DHT11Midlet.java:25)

-javax/microedition/midlet/MIDletTunnelImpl.callStartApp (), bci = 1

-com/sun/midp/midlet/MIDletPeer.startApp (), bci = 5

-com/sun/midp/midlet/MIDletStateHandler.startSuite (), bci = 264

-com/sun/midp/main/AbstractMIDletSuiteLoader.startSuite (), bci = 38

-com/sun/midp/main/CldcMIDletSuiteLoader.startSuite (), bci = 5

-com/sun/midp/main/AbstractMIDletSuiteLoader.runMIDletSuite (), bci = 132

-com/sun/midp/main/AppIsolateMIDletSuiteLoader.main (), bci = 26

I have the following permissions set:

jdk.dio.gpio.GPIOPinPermission "*:*" "open,setdirection"

jdk.dio.DeviceMgmtPermission "*:*" "open"

I tried a few other pins too; I don't know if some pins are input-only or output-only.

Any help would be appreciated. I could not find documentation explaining how to configure more than one action for a permission ("open,setdirection"), so I just kept trying until it stopped complaining about the values...

What I need is to open a pin, set it to OUTPUT, write a few high and low values, then set its direction to INPUT and read back high and low values. But right now my GPIOPinConfig seems to be the problem.

(Setting the direction to DIR_INPUT_ONLY or DIR_OUTPUT_ONLY works until I try to change the direction of the pin, which is expected.)

Hi Charl,

As far as I know, there is no current plan to implement 1-Wire in Java ME Embedded.

I also looked at Pi4J, and they do not support 1-Wire either; however, there is an enhancement request to add support for the bit-banging that a Linux driver would have to perform to make 1-Wire work.

The Raspberry Pi supports it; it's just Java ME that holds me back.

BTW, the article referenced in the enhancement request notes that there is no native support for 1-Wire on the Raspberry Pi; it requires a Linux kernel driver module.

Tom

-

The input and output encodings are not same

Hello

I'm trying to encrypt a value using the 'Encrypt' function, and I'm trying to decrypt the same value on the next page before updating the database.

I am using the same key, algorithm and encoding to decrypt.

But it returns this error: "input and output encodings are not same".

Can someone help me on this?

form.txtPassword is plain text. You can't decrypt() plain text.

--

Adam
