System vlan on Nexus 1000v

Hi all

I understand that a system VLAN allows traffic for that VLAN to keep flowing when the VSM is not accessible, and that a system VLAN should NOT be a normal virtual machine traffic VLAN. In my deployment of a normal vSphere environment with N1kv, I plan to configure these VLANs as system VLANs: ESXi management, N1kv management, control and packet, vMotion, and IP storage.

I configure the VLANs as system VLANs on the uplink port profiles and on the individual port profiles for each VLAN. Correct me if that's wrong.

Which VLANs should be system VLANs, and which should not? The vMotion VLAN? What are the disadvantages of specifying all VLANs as system VLANs? Wouldn't that be better, because even if the VSM went down for some reason, the VEMs would still forward traffic for all virtual machines?

Thank you

Ming

Ming,

Your understanding of system VLANs is not totally accurate. All VLANs keep forwarding when your VSM is not accessible. Each VEM module will continue to pass system and non-system VLAN traffic if the VSM is offline. Each VEM keeps its current programming, but will not accept any changes until the VSM is back online. System VLANs behave differently in that they are always in a forwarding state. System VLANs will forward traffic even before a VEM has been programmed by the VSM. That is why certain profiles require them, e.g. control, packet, etc. These VLANs must be forwarding in order for the VEM to talk to the VSM.

As for your list of "what should be a system VLAN": remove vMotion. There is no reason for your vMotion network to be defined as a system VLAN. All the others are correct.

Also remember that you can ONLY define a VLAN on one uplink port profile. So if you use one uplink for "system" type traffic and another for "VM data" type traffic, any single VLAN should be allowed on only one uplink, not both. Allowing it on both will cause problems. The other thing to keep in mind is that for a "system vlan" to apply, it must be defined on both the vEthernet port profile and an uplink port profile.

E.g.

Let's say my Service Console uses VLAN 10 and my VMs also use the VLAN 10 for their data traffic. (Bad design, but just to illustrate a point).

Requiring the system VLAN to be set in "two places" lets you treat ONLY your "Service Console" traffic as system traffic while still applying security programming to your "VM data" VLAN traffic. After a reboot, your Service Console traffic would be forwarded immediately, but your VM data would not be until the VEM had pulled its programming from the VSM.

port-profile type vethernet dvs_ServiceConsole

  vmware port-group

  switchport mode access

  switchport access vlan 10

  no shutdown

  system vlan 10   <== defined as system VLAN

  state enabled

port-profile type vethernet dvs_VM_Data_VLAN10

  vmware port-group

  switchport mode access

  switchport access vlan 10   <== no system VLAN

  no shutdown

  state enabled

port-profile type ethernet system-uplink

  vmware port-group

  switchport mode trunk

  switchport trunk allowed vlan 10,3001-3002

  channel-group auto mode active

  no shutdown

  system vlan 10,3001-3002   <== system VLAN 10

  state enabled
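The "two places" rule can be illustrated with a short Python sketch. It is not part of the Nexus 1000V software; the helper names `parse_vlans` and `effective_system_vlans` are hypothetical, and the sketch only assumes, as described above, that a VLAN is treated as a system VLAN for a port when it is listed under "system vlan" on both the vEthernet profile and the uplink profile.

```python
def parse_vlans(spec):
    """Expand a VLAN list such as "10,3001-3002" into a set of ints."""
    vlans = set()
    for part in spec.replace(" ", "").split(","):
        if not part:
            continue  # empty spec means "no system vlan configured"
        if "-" in part:
            low, high = (int(x) for x in part.split("-"))
            vlans.update(range(low, high + 1))
        else:
            vlans.add(int(part))
    return vlans


def effective_system_vlans(veth_system, uplink_system):
    """Hypothetical check: a VLAN counts only if BOTH profiles list it."""
    return parse_vlans(veth_system) & parse_vlans(uplink_system)


uplink = "10,3001-3002"                               # system-uplink profile
print(sorted(effective_system_vlans("10", uplink)))   # dvs_ServiceConsole
print(sorted(effective_system_vlans("", uplink)))     # dvs_VM_Data_VLAN10
```

With the profiles from the example, the Service Console profile ends up with VLAN 10 as a system VLAN, while the VM data profile ends up with none even though it uses the same VLAN.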

Hope this clears your understanding.

Kind regards

Robert

Tags: Cisco DataCenter

Similar Questions

-

Removing a "system vlan" from a Nexus 1000V port-profile

We have a Dell M1000e blade chassis with a number of M605 blade servers running ESXi 5.0, using the Nexus 1000V for networking. We use 10G Ethernet on fabrics B and C, for a total of four 10G NICs per server. We do not use the 1G NICs on fabric A. We currently use one NIC on each of the B and C fabrics for virtual machine traffic, and the other NIC on each fabric for management/vMotion/iSCSI traffic. We currently use EqualLogic PS6010 iSCSI arrays and have two port groups configured with iSCSI bindings (one to physical NIC vmnic3 and one to physical NIC vmnic5).

We have added an EMC VNX 5300 unified array to our facility and configured three extra VLANs on our network: two for iSCSI and one for NFS. We added vEthernet port-profiles for the three new VLANs, but when we added the new vmk# ports on some of the ESXi servers, they could not ping anything. We opened a TAC case with Cisco, and it was determined that only a single port group with iSCSI bindings can be bound to a physical uplink at a time.

We decided to temporarily add the new VLANs to the allowed VLAN list on the physical switch trunk ports that are currently used only for VM traffic. We need to remove the new VLANs from the current ethernet port-profile, but we are facing a problem.

The current Nexus 1000V port-profile that must be changed is:

port-profile type ethernet DenverMgmtSanUplinks

  vmware port-group

  switchport mode trunk

  switchport trunk allowed vlan 2308-2306,2311-2315

  channel-group auto mode passive

  no shutdown

  system vlan 2308-2306,2311-2315

  description MGMT SAN UPLINKS

  state enabled

We must remove VLANs 2313-2315 from the "system vlan" list in order to remove them from the "switchport trunk allowed vlan" list.

However, when we try to do so, we get an error saying the port-profile is currently in use:

vsm21a# conf t

Enter configuration commands, one per line. End with CNTL/Z.

vsm21a(config)# port-profile type ethernet DenverMgmtSanUplinks

vsm21a(config-port-prof)# system vlan 2308-2306,2311-2312

ERROR: Cannot delete system VLAN, port-profile in use by interface Po2

We have 6 ESXi servers connected to this Nexus 1000V. Originally they were VEMs 3-8, but apparently when we did a firmware upgrade they were re-registered as VEMs 9-14, and the 6 old VEMs and their associated port-channels are orphaned.

For example, if we look at port-channel 2 in more detail, we see that it is tied to orphaned VEM 3 and has no ports associated with it:

vsm21a(config-port-prof)# sho run int port-channel 2

!Command: show running-config interface port-channel2

!Time: Thu Apr 26 18:59:06 2013

version 4.2(1)SV2(1.1)

interface port-channel2

  inherit port-profile DenverMgmtSanUplinks

  vem 3

vsm21a(config-port-prof)# sho int port-channel 2

port-channel2 is down (No operational members)

  Hardware: Port-Channel, address: 0000.0000.0000 (bia 0000.0000.0000)

  MTU 1500 bytes, BW 100000 Kbit, DLY 10 usec,

  reliability 255/255, txload 1/255, rxload 1/255

  Encapsulation ARPA

  Port mode is trunk

  auto-duplex, 10 Gb/s

  Beacon is turned off

  Input flow-control is off, output flow-control is off

  Switchport monitor is off

  Members in this channel: Eth3/4, Eth3/6

  Last clearing of "show interface" counters never

  102 interface resets

We can probably remove port-channel 2, but we assume the error message about the port-profile being in use will cascade to the other port-channels. We can delete the other orphaned port-channels 4, 6, 8, 10 and 12, as they are associated with the orphaned VEMs, but we expect we will then also get errors for port-channels 13, 15, 17, 19, 21 and 23, which are associated with the active VEMs.

We are looking to see whether there is an easy way to fix this on the VSM, or whether we need to break off one of the physical uplinks on each server, connect it to a vSS or vDS, and migrate all the vmkernel ports off the Nexus 1000V so that we can clean up the VLANs.

You will not be able to remove the VLANs from the system vlan list until nothing is using this port-profile. We are very protective of any VLAN that is designated on the system vlan command line.

You must clean up the old port-channels and the old VEMs. You can safely do "no int port-channel" and "no vem" on devices that are no longer in use.

What you can do is create a new uplink port-profile with the settings you want, then move the interfaces into the new port-profile. It is generally easier to create a new one than to try to clean up the old port-profile with system VLANs.

I would take the following steps.

Create a new port-profile with the settings you want

Put the host in maintenance mode if possible

Pull one NIC out of the old N1Kv eth port-profile

Add that NIC to the new N1Kv eth port-profile

Pull the second NIC out of the old eth port-profile

Add the second NIC to the new port-profile

You will get some duplicate packets and error messages, but it should work.

The other option is to remove the host from the N1Kv and add it back using the new eth port-profile.

Another option is to leave it as is. Unless it really bothers you, there is no harm: no VMs will be able to use those VLANs unless you create a veth port-profile on them.

Louis

-

Cisco Nexus 1000V Series Virtual Switch Module placement in the Cisco Unified Computing System

Hi all

I read a Cisco article entitled "Best Practices in Deploying Cisco Nexus 1000V Series Switches on Cisco UCS B and C Series Cisco UCS Manager Servers" (http://www.cisco.com/en/US/prod/collateral/switches/ps9441/ps9902/white_paper_c11-558242.html). It has a lot of excellent information, but the section that intrigues me has to do with the placement of the VSM in the UCS. The article lists 4 options in order of preference, but does not provide details or the reasons underlying the recommendations. The options are the following:

============================================================

Option 1: VSM external to the Cisco Unified Computing System on the Cisco Nexus 1010

In this scenario, management operations of the virtual environment are accomplished in a manner identical to existing non-virtualized environments. With multiple VSM instances on the Nexus 1010, multiple vCenter data centers can be supported.

============================================================

Option 2: VSM outside the Cisco Unified Computing System on the Cisco Nexus 1000V Series VEM

This model allows centralized management of the virtual infrastructure, and has proved to be very stable...

============================================================

Option 3: VSM outside the Cisco Unified Computing System on the VMware vSwitch

This model allows isolation of the managed devices, and it migrates well to the appliance model of the Cisco Nexus 1010 Virtual Services Appliance. A possible concern here is the management and operational model of the networking links between the VSM and the VEM devices.

============================================================

Option 4: VSM inside the Cisco Unified Computing System on the VMware vSwitch

This model was also stable in test deployments. A possible concern here is the management and operational model of the networking links between the VSM and the VEM devices, and having duplicate switching infrastructures within your Cisco Unified Computing System.

============================================================

As a beginner with both the Nexus 1000V and UCS, I hope someone can help me understand the configuration of these options and, equally important, provide a more detailed explanation of each option and the reasoning behind the preferences (advantages and disadvantages).

Thank you

Pradeep

No, they are different products. vASA will be a virtual version of our ASA device.

ASA is a complete recommended firewall.

-

1000V - Importance of "system vlan" in a port-profile

Hi all

Can someone help me understand the importance of the "system vlan" command in a port profile?

As I understand it, it is used to mark system-critical VLANs (packet, data, etc.) so that their config is pushed to vCenter and they continue to work should the VSM go down. Is that right?

All of the examples I have seen seem to just mark every VLAN (even VM data VLANs) as "system vlan" in their ethernet uplink port profiles. Is that best practice?

In addition, is there a reason to mark vEthernet ports with system vlan?

Cheers,

P

As you are already aware, system vlan ensures access to those VLANs if the VSM goes down and the ESXi host is then rebooted. If system vlan were not enabled, the ESXi host would not be able to talk to the VSM, but this is only the case if the management port is on the Cisco VDS and not on a VSS or VMware VDS.

As I mainly use servers with only two 10 Gb ports, I use system VLANs for ESXi management, storage and vMotion, and, if the vCenter is a virtual machine, also for the VLAN it uses. I do not put any other VLANs in the system VLAN category.

I also use a Layer 3 VSM, so there is no need to worry about the packet and data VLANs. I use that because I have several ESXi farms in different management subnets, and the 1000v in a network management subnet.

I hope this helps.

-

VXLAN on UCS: IGMP with Catalyst 3750, Nexus 5548, Nexus 1000V

Hello team,

My lab consists of a Catalyst 3750 with SVIs acting as the router, Nexus 5548s in a vPC setup, UCS in end-host mode, and a Nexus 1000V with the segmentation feature (VXLAN) enabled.

I have two different VLANs for VXLAN (140 and 141) to demonstrate connectivity across L3.

Hosts with VMkernel on VLAN 140 join the multicast group fine.

Hosts with VMkernel on VLAN 141 do not join the multicast group. As a result, VMs on these hosts cannot ping VMs on hosts in VLAN 140, and they cannot even ping each other.

I turned on debug ip igmp on the L3 switch, and the output shows a timeout while it waits for a report from VLAN 141:

Oct 15 08:57:34.201: IGMP(0): Send v2 general Query on Vlan140

Oct 15 08:57:34.201: IGMP(0): Set report delay time to 3.6 seconds for 224.0.1.40 on Vlan140

Oct 15 08:57:36.886: IGMP(0): Received v2 Report on Vlan140 from 172.16.66.2 for 239.1.1.1

Oct 15 08:57:36.886: IGMP(0): Received Group record for group 239.1.1.1, mode 2 from 172.16.66.2 for 0 sources

Oct 15 08:57:36.886: IGMP(0): Updating EXCLUDE group timer for 239.1.1.1

Oct 15 08:57:36.886: IGMP(0): MRT Add/Update Vlan140 for (*,239.1.1.1) by 0

Oct 15 08:57:38.270: IGMP(0): Send v2 Report for 224.0.1.40 on Vlan140

Oct 15 08:57:38.270: IGMP(0): Received v2 Report on Vlan140 from 172.16.66.1 for 224.0.1.40

Oct 15 08:57:38.270: IGMP(0): Received Group record for group 224.0.1.40, mode 2 from 172.16.66.1 for 0 sources

Oct 15 08:57:38.270: IGMP(0): Updating EXCLUDE group timer for 224.0.1.40

Oct 15 08:57:38.270: IGMP(0): MRT Add/Update Vlan140 for (*,224.0.1.40) by 0

Oct 15 08:57:51.464: IGMP(0): Send v2 general Query on Vlan141   <----- it just hangs here until timeout and goes back to

Oct 15 08:58:35.107: IGMP(0): Send v2 general Query on Vlan140

Oct 15 08:58:35.107: IGMP(0): Set report delay time to 0.3 seconds for 224.0.1.40 on Vlan140

Oct 15 08:58:35.686: IGMP(0): Received v2 Report on Vlan140 from 172.16.66.2 for 239.1.1.1

Oct 15 08:58:35.686: IGMP(0): Received Group record for group 239.1.1.1, mode 2 from 172.16.66.2 for 0 sources

Oct 15 08:58:35.686: IGMP(0): Updating EXCLUDE group timer for 239.1.1.1

Oct 15 08:58:35.686: IGMP(0): MRT Add/Update Vlan140 for (*,239.1.1.1) by 0

If I do a show ip igmp interface, the output shows that there are no joins for VLAN 141:

Vlan140 is up, line protocol is up

  Internet address is 172.16.66.1/26

  IGMP is enabled on interface

  Current IGMP host version is 2

  Current IGMP router version is 2

  IGMP query interval is 60 seconds

  IGMP configured query interval is 60 seconds

  IGMP querier timeout is 120 seconds

  IGMP configured querier timeout is 120 seconds

  IGMP max query response time is 10 seconds

  Last member query count is 2

  Last member query response interval is 1000 ms

  Inbound IGMP access group is not set

  IGMP activity: 2 joins, 0 leaves

  Multicast routing is enabled on interface

  Multicast TTL threshold is 0

  Multicast designated router (DR) is 172.16.66.1 (this system)

  IGMP querying router is 172.16.66.1 (this system)

  Multicast groups joined by this system (number of users):

      224.0.1.40(1)

Vlan141 is up, line protocol is up

  Internet address is 172.16.66.65/26

  IGMP is enabled on interface

  Current IGMP host version is 2

  Current IGMP router version is 2

  IGMP query interval is 60 seconds

  IGMP configured query interval is 60 seconds

  IGMP querier timeout is 120 seconds

  IGMP configured querier timeout is 120 seconds

  IGMP max query response time is 10 seconds

  Last member query count is 2

  Last member query response interval is 1000 ms

  Inbound IGMP access group is not set

  IGMP activity: 0 joins, 0 leaves

  Multicast routing is enabled on interface

  Multicast TTL threshold is 0

  Multicast designated router (DR) is 172.16.66.65 (this system)

  IGMP querying router is 172.16.66.65 (this system)

  No multicast groups joined by this system

Is there a way to check why the hosts on VLAN 141 are not joining successfully? The port-profile configuration on the 1000V for the VLAN 140 and VLAN 141 uplinks and vmkernel ports is identical, except for the VLAN numbers.

Thank you

Trevor

Hi Trevor,

One quick thing to check would be the IGMP config for both VLANs.

Where did you configure the querier for VLANs 140 and 141?

Does the VXLAN transport cross routers? If so, you would need multicast routing enabled.

Thank you!

./Afonso

-

Hello

Thanks for reading.

I have a virtual machine (VM1) connected to a Nexus 1000V distributed switch. The 1000V has a connection to our DMZ (physically, an interface on our Cisco ASA 5520), and 3 other virtual machines are already up and working in the DMZ. The problem is that a SHOW ARP on the ASA shows the MAC addresses of the other VMs but not that of VM1.

The properties for all the VMs (including VM1) participating in the DMZ are the same:

- Network label

- VLAN ID

- Port group

- State - link up

- DirectPath I/O - inactive ("Direct Path I/O has been explicitly disabled for this port")

The only significant difference between VM1 and the others is that they are multihomed and have one foot in our private network. I do not think the absence of a private IP on VM1 is the source of the problem. All the virtual machines are seen as directly connected to the ASA (except VM1).

Have you ever seen this kind of thing before?

Thanks again for reading!

Bob

The systems team:

- Rebuilt the virtual machine

- Moved to another cluster

- Configured for DMZ interface

Something in those steps made the VM visible to the FW.

-

Nexus 1000v - does this config make sense?

Hello

I have started deploying the Nexus 1000v on a 6-host cluster, all running vSphere 4.1 (vCenter and ESXi). The basic configuration, licensing, etc. is already complete, and so far there are no problems.

My doubts concern the actual creation of the system uplinks, port-profiles, etc. Basically, I want to make sure I don't make any mistakes in the way I set things up.

My current setup for each host is like this, with standard vSwitches:

vSwitch0: 2 pNICs active/active, with the management and vMotion vmkernel ports.

vSwitch1: 2 pNICs active/active, dedicated to a storage vmkernel port.

vSwitch2: 2 pNICs active/active for virtual machine traffic.

I thought of translating that to the Nexus 1000v like this:

System-uplink1 with 2 pNICs, where I put the management and vMotion vmk ports

System-uplink2 with 2 pNICs for the storage vmk

System-uplink3 with 2 pNICs for virtual machine traffic.

These three system uplinks are global, right? Or do I set up three unique system uplinks for each host? I thought making the 3 uplinks global would make things a lot easier, because if I change something in an uplink, it will be pushed to all 6 hosts.

Also, I read somewhere that if I use 2 pNICs per system uplink, then I need to set up a port channel on our physical switches?

At the moment the VSM has 3 different VLANs for management, control and packet. I want to migrate those 3 port groups from the standard switch to the n1kv itself.

Also, when I migrated the management port to the N1Kv, the host complained that there is no management redundancy, even though uplink1, where the mgmt port-profile is attached, has 2 pNICs added to it.

What do you guys think? Any other best practices are much appreciated.

Thanks in advance,

Yes, uplink port-profiles are global.

What you propose works, with one caveat. You cannot overlap a VLAN between these uplinks. So if your management uplink uses VLAN 100 and your VM data uplink also uses VLAN 100, that will cause problems.
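A quick way to sanity-check a planned design against this caveat is to compare the allowed VLAN lists of the uplink port-profiles before applying them. The Python sketch below is only an illustration: the function names are hypothetical, and apart from VLAN 100 the profile contents are made-up sample values, not taken from the poster's environment.

```python
from itertools import combinations


def parse_vlans(spec):
    """Expand a VLAN list such as "100,300-310" into a set of ints."""
    vlans = set()
    for part in spec.replace(" ", "").split(","):
        if not part:
            continue
        if "-" in part:
            low, high = (int(x) for x in part.split("-"))
            vlans.update(range(low, high + 1))
        else:
            vlans.add(int(part))
    return vlans


def overlapping_vlans(uplinks):
    """Return {(uplink_a, uplink_b): [shared VLANs]} for each clashing pair."""
    expanded = {name: parse_vlans(spec) for name, spec in uplinks.items()}
    clashes = {}
    for a, b in combinations(sorted(expanded), 2):
        shared = expanded[a] & expanded[b]
        if shared:
            clashes[(a, b)] = sorted(shared)
    return clashes


# Sample allowed-VLAN lists (assumed values for illustration only).
planned = {
    "system-uplink1": "100,101",      # management + vMotion
    "system-uplink2": "200",          # storage
    "system-uplink3": "100,300-310",  # VM data, wrongly reusing VLAN 100
}
print(overlapping_vlans(planned))
```

An empty result means no VLAN is carried on more than one uplink profile; any entry names the pair of profiles to fix.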

Louis

-

I'm working on deploying the Cisco Nexus 1000v to our ESX cluster. I have read the Cisco "Getting Started Guide" and the "Installation Guide", but the guides are written for a generic environment and obviously do not answer my questions, which are based on our architecture.

This comment in the Cisco "Getting Started Guide" makes it sound like you can't uplink to several switches from an individual ESX host:

"The server administrator must not assign more than one uplink on the same VLAN without port channels. Assigning more than one uplink on the same host is not supported by the following:

A profile without port channels.

Port profiles that share one or more VLANs."

Given this comment, is it possible to port-channel 2 pNICs on one side of the link (the ESX host side) and have each go to a separate upstream switch? I am trying to create redundancy for the ESX host using 2 switches, but this comment sounds like I need a port channel on the ESX side to associate the VLAN with both interfaces. How do you then manage each link on the upstream switch side? I don't think you can add them to a port channel on that side of the uplink, as the port channel protocol will not negotiate properly and will show one side down on the ESX/VEM side.

Am I overcomplicating this? Thank you.

Do not port-channel on the upstream side; it is possible to port-channel to different switches using vPC MAC pinning mode. On the upstream switches, make sure the ports are configured the same: same speed, switchport config, VLANs, etc. (but no port channel).

On the VSM, create a single eth type port-profile with the following channel-group command:

port-profile type ethernet Uplink-VPC

  vmware port-group

  switchport mode trunk

  channel-group auto mode on mac-pinning

  no shutdown

  system vlan 2,10

  state enabled

What that will do is create a port channel on the N1KV only. Your ESX host will get redundancy, but your load-balancing algorithm will be simple round robin of the VMs. If you want to pin specific traffic to a particular uplink, you can add the "pinning id" command to your veth type port-profiles.

To see the pinning, you can run

module vem x execute vemcmd show port

n1000v-MV# module vem 5 execute vemcmd show port

  LTL    VSM Port  Admin Link  State  PC-LTL  SGID  Vem Port

   18     Eth5/2     UP   UP    FWD     305     1  vmnic1

   19     Eth5/3     UP   UP    FWD     305     2  vmnic2

   49      Veth1     UP   UP    FWD       0     1  vm1-3.eth0

   50      Veth3     UP   UP    FWD       0     2  linux-4.eth0

  305        Po5     UP   UP    FWD       0

The key is the SGID column. vmnic1 is SGID 1 and vmnic2 is SGID 2. VM vm1-3 is pinned to SGID 1 and linux-4 is pinned to SGID 2.

You can kill a link and the traffic should fail over.
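To make the round-robin pinning idea concrete, here is a small Python model. It is not how the VEM is implemented; the function names are hypothetical and the failover rule (spread the affected ports over the surviving sub-groups in turn) is an assumption made purely for illustration.

```python
from itertools import cycle


def pin_round_robin(veths, subgroups):
    """Assign each vEthernet port to an uplink sub-group (SGID) in turn."""
    order = cycle(subgroups)
    return {veth: next(order) for veth in veths}


def repin_after_failure(pinning, failed_sgid, subgroups):
    """Assumed failover rule: move ports off a failed sub-group."""
    survivors = [sg for sg in subgroups if sg != failed_sgid]
    if not survivors:
        raise ValueError("no surviving uplink sub-group")
    order = cycle(survivors)
    return {
        veth: (next(order) if sgid == failed_sgid else sgid)
        for veth, sgid in pinning.items()
    }


pinning = pin_round_robin(["Veth1", "Veth3"], [1, 2])
print(pinning)                                  # Veth1 -> SGID 1, Veth3 -> SGID 2
print(repin_after_failure(pinning, 1, [1, 2]))  # both ports end up on SGID 2
```

This mirrors the output above, where Veth1 sits on SGID 1 (vmnic1) and Veth3 on SGID 2 (vmnic2), and shows why killing one link moves its VMs to the other.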

Louis

-

What does the Nexus 1000v version number mean

Can anybody explain the long Nexus 1000v version number, for example 5.2(1)SV3(1.15)?

And what does SV mean in the version number?

Thank you

SV is the abbreviation of "Switched VMware"

See below for a detailed explanation:

http://www.Cisco.com/c/en/us/about/Security-Center/iOS-NX-OS-reference-g...

Cisco NX-OS software numbering

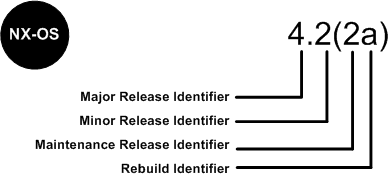

Cisco NX-OS Software is a data center-class operating system that provides high availability through a modular design. Cisco NX-OS Software is based on Cisco MDS 9000 SAN-OS Software, and it supports Cisco Nexus Series switches and Cisco MDS 9000 Series multilayer switches. Cisco NX-OS Software contains a kickstart image and a system image. Both images contain a major version identifier, a minor version identifier and a maintenance release identifier, and they may also contain a rebuild identifier, which may also be referred to as a support patch. (See Figure 6.)

Cisco NX-OS Software for Cisco Nexus 7000 Series and MDS 9000 Series switches uses the numbering scheme illustrated in Figure 6.

Figure 6. Cisco NX-OS numbering for Cisco Nexus 7000 Series and MDS 9000 Series switches

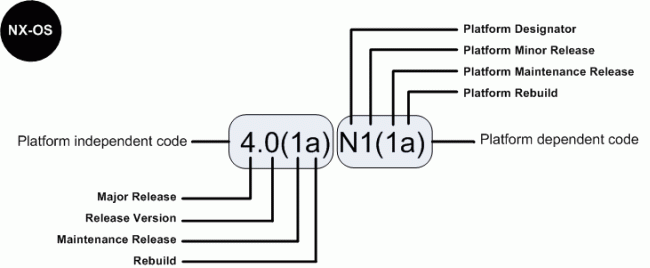

For the other members of the family, Cisco NX-OS Software uses a combination of platform-independent and platform-dependent schemes, as shown in Figure 6a.

Figure 6a. Cisco NX-OS Software numbering for Nexus 4000 and 5000 Series and Nexus 1000 virtual switches

The platform designator is N for Nexus 5000 Series switches, E for Nexus 4000 Series switches, and S for Nexus 1000 Series switches. In addition, the Nexus 1000 virtual switch uses a two-letter platform designation where the second letter indicates the hypervisor vendor that the virtual switch is compatible with, for example V for VMware. Features and bug fixes in the platform-independent code are also present in the platform-dependent release. In Figure 6a above, the bug fixes in Cisco NX-OS Software version 4.0(1a) are present in version 4.0(1a)N1(1a).
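The numbering scheme described above can be pulled apart with a regular expression. The following Python sketch is an illustration only: the pattern and the `parse_nxos_version` helper are assumptions based on the examples quoted in this thread (such as 5.2(1)SV3(1.15) and 4.0(1a)N1(1a)), not an official Cisco grammar, and other version formats may not match.

```python
import re

# platform-independent part, e.g. 5.2(1); optional platform part, e.g. SV3(1.15)
VERSION_RE = re.compile(
    r"^(?P<base>\d+\.\d+\([0-9a-z]+\))"
    r"(?:(?P<platform>[A-Z]{1,2})(?P<platform_release>\d+\([0-9a-z.]+\)))?$"
)


def parse_nxos_version(text):
    """Split a version string into platform-independent and -dependent parts."""
    match = VERSION_RE.match(text.replace(" ", ""))
    if match is None:
        raise ValueError(f"unrecognised version string: {text!r}")
    parts = match.groupdict()
    platform = parts["platform"]
    # Second letter of a two-letter designator names the hypervisor vendor.
    parts["hypervisor"] = platform[1] if platform and len(platform) == 2 else None
    return parts


print(parse_nxos_version("5.2(1)SV3(1.15)"))
print(parse_nxos_version("4.0(1a)N1(1a)"))
```

For 5.2(1)SV3(1.15) this yields the platform-independent release 5.2(1), the platform designator SV (S for Nexus 1000, V for VMware) and the platform-dependent release 3(1.15).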

-

Nexus 1000V loop prevention

Hello

I wonder if there is a mechanism I can use to protect a network against an L2 loop created on the virtual server side in a VMware environment with the Nexus 1000V.

I know the Nexus 1000V can prevent loops on the external links, but I have found no information on features that can prevent a loop caused by a bridge set up in the OS of a VMware virtual server.

Thank you in advance for an answer.

Regards


Lukas

Hi Lukas.

To avoid loops, the N1KV does not pass traffic between physical network cards, and it also silently drops traffic between vNICs that is bridged by the operating system.

http://www.Cisco.com/en/us/prod/collateral/switches/ps9441/ps9902/guide_c07-556626.html#wp9000156

No explicit configuration is needed on the N1KV.

Padma

-

Nexus 1000v, UCS, and Microsoft Network Load Balancing

Hi all

I have a client that is implementing a new Exchange 2010 environment. They have a requirement to configure load balancing for the Client Access servers. The environment consists of VMware vSphere running on top of Cisco UCS blades with the Nexus 1000v dvSwitch.

Everything I've read so far indicates that I must do the following:

1. Configure MS load balancing in Multicast mode (selecting the IGMP protocol option).

2. Create a static ARP entry for the virtual cluster address on the router for the server subnet.

3. (maybe) Configure a static MAC table entry on the router for the server subnet.

4. (maybe) Disable IGMP snooping on the appropriate VLAN in the Nexus 1000v.

My questions are:

1. Is anybody successfully running a similar configuration?

2. Are there steps missing from the list above, or steps I shouldn't do?

3. If I disable IGMP snooping on the Nexus 1000v, should I also disable it on the UCS fabric interconnects and the router?

Thanks a lot for your time,

Aaron

Aaron,

Your steps above are correct; you need steps 1-4 for it to operate correctly. Normally people create a separate VLAN/subnet for their NLB interfaces, to prevent unnecessary mcast frame floods within the network.

To answer your questions

(1) I have seen multiple customers run this configuration

(2) Your steps are correct

(3) You can't toggle IGMP snooping on UCS. It is enabled by default and is not a configurable option. There is no need to change anything within the UCS regarding MS NLB with the above procedure. FYI, the ability to disable/enable IGMP snooping on UCS is scheduled for an upcoming release, 2.1.

This is the correct method until we have the option of configuring static multicast MAC entries on the Nexus 1000v. If this is a feature you'd like, please open a TAC case and request that bug CSCtb93725 be linked to your SR. This will give more "push" to our development team to prioritize this request.

Hopefully some other customers can share their experience.

Regards,

Robert

-

How to change Nexus 1010 and Nexus 1000v IP addresses

Hi Experts,

We run two VSMs and a NAM on the Nexus 1010. The Nexus 1010 version is 4.2.1.SP1.4, and the Nexus 1000v version is 4.0.4.SV1.3c. Now we need to change the management IP addresses to different ones. Where can I find an SOP or config template? And is there anything I need to keep in mind?

If it is only the mgmt0 IP address you are changing, you can simply enter the new address under the mgmt0 interface. It automatically syncs with the VC.

I guess you are also trying to change the IP address of the VC and the management VLAN. One way to do this is:

- From the Nexus 1000v, disconnect the connection to the VC (svs connection -> no connect)

- Change the IP address of the VC and update it on the VSM (svs connection -> remote ip address)

- Change the mgmt0 address of the Nexus 1000v

- Change the mgmt VLAN on the 1010

- Change the mgmt address of the 1010

- Reconnect the Nexus 1000v to the VC (svs connection -> connect)

You will also need to change the VLAN configuration on the upstream switch, plus the connectivity to the VC.

Thank you

Shankar

-

Nexus 1000V design/implementation

Hi team,

I have a premium partner who is an ATP on Data Center Unified Computing. He has posted this question, I hope you can help me to provide the resolution.

I have questions about nexus 1KV design/implementation:

-How do I migrate virtual switches other than vswitch0 (each ESX server has 3 vswitches and the VSM installation wizard only migrates vswitch0)? For example, other vswitches with other VLANs... please tell me how...

-Can VUM (VMware Update Manager) install the VEM modules on the ESX servers, or must the VEM be installed manually on each ESX server?

-Assuming VUM installs all the VEM modules, is the VEM version (vib package) automatically compatible with the existing VMware build version?

-Is it necessary to create the PACKET-MANAGEMENT-CONTROL port groups on ALL the ESX servers before migrating to the Nexus 1000, or is the VEM installation enough?

-According to the Cisco manual the VSM can participate in vMotion, but how? What is the recommendation? When the primary VSM is being moved, does the secondary VSM take control? What happens to connectivity for all the virtual machines?

-When there are two clusters in a VMware vCenter, how should the VSM be installed/configured?

-For high availability, which is the best Nexus design choice, in view of the VMware features (FT, DRS, vMotion, cluster)?

-How do I migrate an existing iSCSI VMkernel port group to the Nexus? What are the steps? The Cisco manual "Migration from VMware vSwitch to Cisco Nexus 1000V" shows how to generate the port profile, but how do I create the iSCSI target (IP address, username/password)? Where is it defined?

-Assuming the VEM licenses are not enough for all the ESX servers, what will happen to the connectivity of the virtual machines on hosts without VEM licenses? Can they work with VMware vswitches?

I have to install the Nexus 1000V in a VMware VDI platform with multiple ESX servers, 3 vswitches on each ESX server, several virtual machines running, two clusters defined with vMotion and DRS active, and central iSCSI storage.

I have several Cisco manuals on the Nexus, but my concern is our specific installation, and the migration options are not covered in depth. Do you have "success stories" or customer experiences of implementations that involved migration to the Nexus?

Thank you in advance.

Jojo Santos

Cisco partner Helpline presales

Thanks for the questions of Jojo, but this question of type 1000v is better for the Nexus 1000v forum:

https://www.myciscocommunity.com/Community/products/nexus1000v

Answers inline. I suggest you go through the Getting Started guides to gain a solid understanding of the basic concepts & operations prior to deployment.

jojsanto wrote:

Hi Team,

I have a premium partner who is an ATP on Data Center Unified Computing. He posted this question, hopefully you can help me provide resolution.

I have questions about nexus 1KV design/implementation:

-How do I migrate virtual switches other than vSwitch0 (each ESX server has 3 vSwitches and the VSM installation wizard only migrates vSwitch0)? For example, other vSwitches with other VLANs. Please tell me how...

[Robert] After your initial installation you can easily migrate all VMs within the same vSwitch Port Group at the same time using the Network Migration Wizard. Simply go to Home - Inventory - Networking, right-click on the 1000v DVS and select "Migrate Virtual Machine Networking...". Follow the wizard to select your Source (vSwitch Port Groups) & Destination DVS Port Profiles.

-With VUM (VMware Update Manager), is it possible to install the VEM modules on the ESX servers, or must I install the VEM manually on each ESX server?

[Robert] As per the Getting Started & Installation guides, you can use either VUM or the manual installation method for the VEM software install.

-Supposing VUM installs all the VEM modules, is the VEM version (VIB package) automatically compatible with the existing VMware build?

[Robert] Yes. Assuming VMware has added all the latest VEM software to their online repository, VUM will be able to pull down & install the correct one automatically.

-Do I need to create the PACKET/MANAGEMENT/CONTROL port groups on ALL ESX servers before migrating to the Nexus 1000V, or is the VEM installation enough?

[Robert] If you're planning on keeping the 1000v VSM on vSwitches (rather than migrating it to the 1000v) then you'll need the Control/Mgmt/Packet port groups on each host you ever plan on running/hosting the VSM on. If you create the VSM port groups on the 1000v DVS, then they will automatically exist on all hosts that are part of the DVS.
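
As a rough sketch of the second option (VSM port groups created on the 1000v DVS), the vEthernet port profiles could look something like the following. The profile names and the VLAN IDs 260 (control) and 261 (packet) are placeholders for illustration, not values from this thread; a management profile would follow the same pattern. The same VLANs would also need to be listed as system VLANs on the uplink port profile that carries them.

```
! Hypothetical names and VLAN IDs; adjust to your environment
vlan 260
  name n1kv-control
vlan 261
  name n1kv-packet

port-profile type vethernet n1kv-control
  vmware port-group
  switchport mode access
  switchport access vlan 260
  no shutdown
  ! system vlan lets this VLAN forward before the VEM is programmed by the VSM
  system vlan 260
  state enabled

port-profile type vethernet n1kv-packet
  vmware port-group
  switchport mode access
  switchport access vlan 261
  no shutdown
  system vlan 261
  state enabled
```

Once the profiles are in "state enabled" they show up in vCenter as port groups on the DVS, so every host that joins the DVS gets them without any per-host work.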

-According to the Cisco manuals the VSM can participate in VMotion, but how? What is the recommendation? When the primary VSM is moving, does the secondary VSM take control? What happens to connectivity for all the virtual machines?

[Robert] Since a VMotion does not really impact connectivity for a significant amount of time, the VSM can easily be VMotioned around even if it's a single standalone deployment. Just like you can VMotion vCenter (which manages the actual task), you can also VMotion a standalone or redundant VSM without problems. No special considerations here other than the usual VMotion pre-reqs.

-When there are two clusters in one VMware vCenter, how must I install/configure the VSM?

[Robert] No different. The only consideration that changes "how" you install a VSM is a vCenter with multiple Datacenters. VEM hosts can only connect to a VSM that resides within the same Datacenter. Different clusters are not a problem.

-For high availability, which is the best Nexus design choice, considering VMware features (FT, DRS, VMotion, Cluster)?

[Robert] There are multiple "Best Practice" designs which discuss this in great detail. I've attached a draft doc to this thread. A public one will be available in the coming month. Some points to consider: you do not need FT. FT is still maturing, and since you can deploy redundant VSMs at no additional cost, there's no need for it. For DRS you'll want to create a DRS rule to avoid ever hosting the Primary & Secondary VSM on the same host.

-How do I migrate an existing VMkernel iSCSI port group to the Nexus? What are the steps? The Cisco manual "Migration from VMware vSwitch to Cisco Nexus 1000V" shows how to generate the port-profile, but

how do I create the iSCSI target (IP address, user/password)? Where is it defined?

[Robert] You can migrate any VMKernel port from vCenter by selecting a host, going to the Networking Configuration - DVS and selecting Manage Virtual Adapters - Migrate Existing Virtual Adapter. Then follow the wizard. Before you do so, create the corresponding vEth Port Profile on your 1000v, assign the appropriate VLAN etc. All VMKernel IPs are set within vCenter; the 1000v is Layer 2 only, so we don't assign Layer 3 addresses to virtual ports (other than Mgmt). All the rest of the iSCSI configuration is done via vCenter - Storage Adapters as usual (for Targets, CHAP Authentication etc.).
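
For the "create the corresponding vEth Port Profile" step, a minimal sketch might look like this. The profile names and VLAN 100 are assumptions made up for the example; note there is no IP address, target or CHAP setting anywhere in it, since those stay in vCenter. Marking the storage VLAN as a system VLAN is optional, but if you do, it has to appear on both the vEthernet profile and the uplink profile.

```
! Hypothetical profile names and VLAN ID
vlan 100
  name iscsi

port-profile type vethernet iscsi-vmk
  vmware port-group
  switchport mode access
  switchport access vlan 100
  no shutdown
  system vlan 100
  state enabled

! The uplink profile carrying the iSCSI VLAN must allow it as well
port-profile type ethernet storage-uplink
  vmware port-group
  switchport mode trunk
  switchport trunk allowed vlan 100
  no shutdown
  system vlan 100
  state enabled
```

After the profile is enabled, "iscsi-vmk" appears as a destination port group in the Migrate Existing Virtual Adapter wizard.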

-Supposing there are not enough VEM licenses for all ESX servers, what will happen to the connectivity of the virtual machines on hosts without VEM licenses? Can they operate with VMware vSwitches?

[Robert] When a VEM comes online with the DVS, if there are not enough available licenses to license EVERY socket, the VEM will show as unlicensed. Without a license, the virtual ports will not come up. You should closely watch your licenses using the "show license usage" and "show license usage " for detailed allocation information. At any time a VEM host can still utilize a vSwitch - with or without 1000v licenses - assuming you still have adapters attached to the vSwitches as uplinks.

I must install the Nexus 1000V on a VMware platform with VDI, with several ESX servers, 3 vSwitches on each ESX server, several virtual machines running, two clusters defined with VMotion and DRS active, and central storage over iSCSI.

I have several Cisco manuals about the Nexus, but they focus mainly on installation topics and the migration options are not covered extensively. Do you have "success stories" or customer experiences of implementations with migrations to the Nexus?

[Robert] Have a good look around the Nexus 1000v community Forum. Lots of stories and information you may find helpful.

Good luck!

-

[Nexus 1000v] VEM can't be added to VSM

Hi all

In my lab, I have a problem with the Nexus 1000V: the VEM cannot be added to the VSM.

+ The VSM is already installed on ESX 1 (standalone or HA), and you can see:

Cisco_N1KV# show module

Mod  Ports  Module-Type                       Model               Status
---  -----  --------------------------------  ------------------  ------------
1    0      Virtual Supervisor Module         Nexus1000V          active *

Mod  Sw                HW
---  ----------------  ------------------------------------------------
1    4.2(1)SV1(4a)     0.0

Mod  MAC-Address(es)                         Serial-Num
---  --------------------------------------  ----------
1    00-19-07-6c-5a-a8 to 00-19-07-6c-62-a8  NA

Mod  Server-IP        Server-UUID                           Server-Name
---  ---------------  ------------------------------------  --------------------
1    10.4.110.123     NA                                    NA

+ The VEM is installed on ESX2:

[[email protected] ~]# vem status

VEM modules are loaded

Switch Name      Num Ports   Used Ports  Configured Ports  MTU     Uplinks
vSwitch0         128         3           128               1500    vmnic0

VEM Agent (vemdpa) is running

[[email protected] ~]#

Any advice on how to get this working?

Thank you very much

Doan,

Need more information.

Was the host added to the 1000v DVS via vCenter successfully?

If so, there is probably a problem with your Control VLAN communication between the VSM and the VEM. Start there and ensure that the VLAN has been created on all intermediate switches and that it is allowed on each trunk end to end.
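
A few commands that may help narrow this down are sketched below. VLAN 260 stands in for whatever your control VLAN actually is (it is not taken from this thread), and the exact syntax for checking trunks on the intermediate switches depends on the platform.

```
! On the VSM: confirm the domain ID, control/packet VLANs and the vCenter connection
show svs domain
show svs connections
show module

! On each intermediate switch: confirm the control VLAN exists (260 is a placeholder)
show vlan id 260
```

```
# On the ESX host running the VEM
vem status -v
vemcmd show card
vemcmd show port
```

The domain ID and control VLAN reported by "vemcmd show card" on the host should match what "show svs domain" reports on the VSM; a mismatch or a missing VLAN on a trunk is a common reason for a VEM not showing up in "show module".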

If you're still stuck, paste the running config of your VSM.

Kind regards

Robert

-

Nexus 1000v and vmkernel ports

What is the best practice for putting vmkernel ports on the Nexus 1000v? Is it a good idea to put all the VM and vmkernel ports on the Nexus 1000v DVS, or should some vmkernel ports, such as management, stay on a standard vSwitch? If something happens to the 1000v, all management and VMs will be unreachable.

any tips?

Yep, that's correct. System port profiles don't require any communication between the VEM and the VSM.
