Effects of sampling frequency on the convolution of waveforms (need feedback from a signal processing GURU)

I have included my code as version 8.5 for those who have not yet upgraded to 8.6. I have also included some screenshots so that you can replicate the results I got. I hope that a signal processing guru can shed light on what I describe below.

This VI convolves the impulse response of a simulated servomotor, which is essentially a damped sine, with the input signal, which is a step function. The resulting convolved signal should be IDENTICAL to the step response of the motor, which is shown in RED on display 1. As you can see, the convolution result on display 2 shows the same frequency structure, but its magnitude is INCORRECT. As you can see in the 2 screenshots, the magnitudes differ by a factor of 2, and so does the sampling frequency of the waveform. Why the sampling frequency has an impact on the scale is very strange & disturbing.

Would appreciate any corrections & explanations so that I can trust the convolution for input waveforms other than just the step function.

OK, I think I have it working now. Your premise about the effect of sampling on the derivative is not the issue. What the sampling frequency does affect is the time base of the convolution. Because the convolution is discrete rather than continuous, the length of the array must be taken into account, and the sampling frequency must be consistent with that length so that 1 second corresponds to 1 second. If the sampling frequency is 2 kHz and the length of the array is 1000, a factor of 2 must be applied to get the correct time base. In addition, to account for the DC shift, the ZERO gain factor must be added to the convolved signal to get the correct magnitude.
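For anyone hitting the same scaling issue, here is a minimal Python sketch (not the original VI) of why the sampling frequency shows up in the magnitude. A plain discrete convolution is a sum, so it only approximates the continuous convolution integral once it is multiplied by the sample interval dt = 1/fs. The damped-sine parameters `A` and `W` and all function names are assumptions made up for this illustration.

```python
import math

A = 5.0                    # assumed damping coefficient, 1/s
W = 2.0 * math.pi * 4.0    # assumed oscillation frequency, rad/s

def impulse_response(t):
    """Damped sine standing in for the servomotor impulse response."""
    return math.exp(-A * t) * math.sin(W * t)

def exact_step_response(t):
    """Closed-form integral of the damped sine from 0 to t."""
    decay = math.exp(-A * t)
    return (W - decay * (A * math.sin(W * t) + W * math.cos(W * t))) / (A * A + W * W)

def discrete_convolution(x, h):
    """Plain discrete convolution, truncated to len(x) output samples."""
    return [sum(h[k] * x[i - k] for k in range(i + 1)) for i in range(len(x))]

def step_by_convolution(fs, duration=0.5):
    """Return (raw, dt-scaled, exact) step response at the last sample."""
    dt = 1.0 / fs
    n = int(round(duration * fs))
    h = [impulse_response(i * dt) for i in range(n)]
    step = [1.0] * n
    raw = discrete_convolution(step, h)
    scaled = [dt * y for y in raw]   # multiply by dt to approximate the integral
    t_end = (n - 1) * dt
    return raw[-1], scaled[-1], exact_step_response(t_end)

for fs in (1000.0, 2000.0):
    raw, scaled, exact = step_by_convolution(fs)
    print(f"fs={fs:6.0f} Hz  raw={raw:9.4f}  scaled={scaled:.5f}  exact={exact:.5f}")
```

The raw sums differ by roughly the ratio of the two sampling frequencies, while the dt-scaled values stay close to the closed-form step response at both rates.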

Thanks for making me think more deeply.


Similar Questions

-

Doubts about the sampling frequency when generating and acquiring

When generating and acquiring samples at the same time, is the maximum sampling frequency the board's maximum sampling frequency divided by the number of generation and acquisition tasks?

Thank you

Hi Houari,

You should read the specifications of your DAQPad!

It is clearly stated: analog input = 200 kS/s sampling rate, but analog output = sample rate of 300 S/s, or even just 50 S/s for hardware timing!

-

Reading the sampling frequency of the NI 9862

Hi all

I use a DAQ chassis with modules 9205 (analog input) and 9862 (NI-XNET CAN).

I have a program to synchronize the modules for acquisition based on the attached example. My question is how to determine the rate at which data comes from the 9862?

It seems to be double the rate of the 9205 when I set the sampling rate for the 9205 to 500 Hz.

Is there a property node or a method that I can use to find the rate? I looked in the manual, and it gives no information.

Thank you

Griff

griff32,

The XNET CAN baud rate is defined in your database. You can also check it using a property node. In the example, expand the XNET Session property node and select Interface > Baud Rate. You can right-click it, change it to read, and wire it to an indicator.

Alternatively, you can write to this property node to override the baud rate in the database. The baud rate must be compatible with the speed of your network. It also has a maximum of 1 Mbit/s. If you want both to acquire the same amount of data, I would recommend changing the rate of the analog input task or the number of samples to read.

-

Sampling frequency for the output of a USB-6211 data acquisition card?

Hello-

I use a CGI CMOS FireWire camera to read an interference pattern, then a simple Fourier transform spectral interferometry (FTSI) phase retrieval algorithm to detect the relative phase between successive shots. My camera has a 28 kHz line scan rate, and my phase retrieval algorithm takes ~0.7 ms (from the camera trigger to the phase output). I use the resulting signal to control a piezoelectric stack, by sending a single-sample voltage to the analog output of a USB-6211 data acquisition card.

Sending this output voltage increases my loop time to 4 ms; I would really like to achieve a sampling rate of 1 kHz or better. Is the problem with my DAQ card or with the processor in my computer? Can NI DAQ cards support these speeds?

Thank you

-Mike Chini

Hey Mike,

With USB, your loop rate will be around or under 1 kHz, even on the best systems. USB has higher latency and less determinism than PCI and PCIe. You can get much better single-sample AO rates on a PCI card, potentially a PCI-6221. We have a few benchmarks for AI/AO in a loop on RT targets for PCI; you should be able to get similar performance in Windows, but if you do a lot of other processing, your loop rates may suffer.

Hope this helps,

Andrew S

-

What is the relationship between the sampling frequency and the number of samples per channel?

In my world, if I wanted to sample for 10 seconds at 10 Hz (100 S/ch), I would specify a rate of 10 and a number of samples of 100. This would take 10 seconds to return data. The task does not appear to behave this way. No matter what rate and number of samples I choose, I get spammed with data at 1 Hz or more.

What am I doing wrong?

This problem was resolved through a phone support request. It turns out that the minimum sampling frequency of the NI 9239 is 14xx S/s. I don't know why there is a minimum sampling frequency, but now I have to move on to the next question, discussed at this link:
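The arithmetic behind this can be sketched in a few lines of Python. This assumes the driver silently raises a too-low request to the device minimum, which matches what was observed here but is not confirmed in the thread. The function names are made up, and `HYPOTHETICAL_MIN_RATE_HZ` is a placeholder, not the 9239's actual minimum.

```python
def acquisition_time_s(samples_per_channel, rate_hz):
    """Time needed to collect the requested samples at a given rate."""
    return samples_per_channel / rate_hz

def coerced_rate_hz(requested_hz, device_min_hz):
    """Assumed behaviour: a request below the device minimum is raised to it."""
    return max(requested_hz, device_min_hz)

HYPOTHETICAL_MIN_RATE_HZ = 1000.0  # placeholder value for illustration only

expected = acquisition_time_s(100, 10.0)
actual = acquisition_time_s(100, coerced_rate_hz(10.0, HYPOTHETICAL_MIN_RATE_HZ))
print(f"expected {expected} s per read, actual {actual} s per read")
```

With a minimum rate far above the requested 10 Hz, each 100-sample read completes in a fraction of a second, so the data arrives much more often than once every 10 seconds.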

-

Hello

In the code in the example attached, I create a task with a single channel of AI.

I get the maximum sampling frequency using DAQmxGetDevAIMaxSingleChanRate (or DAQmxGetDevAIMaxMultiChanRate), both return the same value of 1351 s/s.

When I try to configure the sample clock using DAQmxCfgSampClkTiming at the maximum sampling frequency, it does not accept the rate and returns the following error. Note that the error message shows 2 channels, even though only one channel has been added.

OUTPUT:

DAQmx error:

Sampling frequency is greater than the maximum sampling frequency for the number of specified channels.

Reduce the sampling frequency or the number of channels. Increasing the conversion rate or reducing the sample delay can also mitigate the problem, if you set either of them.

Number of channels: 2

Sampling rate: 1.351351e3

Maximum sampling frequency: 675.675676

Why does the device driver think I have 2 channels in the task, when only one channel has been added?

Please find the code to reproduce this problem attached.

Kind regards

whemdan

The MathWorks

Hello w,

By default, the ENET/WLS/USB-9213 module in NI-DAQmx has the AI.AutoZeroMode property set to DAQmx_Val_EverySample. This causes NI-DAQmx to acquire the device's internal ground channel (_aignd_vs_aignd) on every sample to return more accurate measurements, even if the operating temperature of the device drifts over time. If you need higher sampling frequencies than this allows, you can call DAQmxSetAIAutoZeroMode(..., DAQmx_Val_Once) (which acquires the auto-zero channel once when you start the task) or DAQmxSetAIAutoZeroMode(..., DAQmx_Val_None) (which disables auto-zero entirely).

Note that for thermocouple measurements using the NI 9213's built-in cold-junction compensation sensor, NI-DAQmx acquires the built-in CJC channel (_cjtemp) on every sample as well, for the same reason.

Brad
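The numbers in the error message are consistent with a simple model in which one converter is shared by every scanned channel, including the hidden auto-zero channel. The Python sketch below only illustrates that assumed model; the function name and the `extra_channels` parameter are made up here and are not part of NI-DAQmx.

```python
def max_rate_per_channel(convert_rate_hz, user_channels, extra_channels=1):
    """Assumed model: one converter is shared by all scanned channels,
    including channels the driver adds on its own (auto-zero, CJC)."""
    return convert_rate_hz / (user_channels + extra_channels)

# One user channel plus the hidden auto-zero channel
print(max_rate_per_channel(1351.351351, 1))
# Same channel with auto-zero acquired once or disabled (no hidden channel)
print(max_rate_per_channel(1351.351351, 1, extra_channels=0))
```

Under this model, a single user channel with the hidden channel included gives about 675.68 S/s, the maximum quoted in the error message.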

-

Loop sampling frequency problem

Hello

I am a student working on a thesis about an electric vehicle tracking system in LabVIEW. I collect analog measurement data from a USB-6009 through the DAQ Assistant, and a state machine inside a while loop does the tracking process.

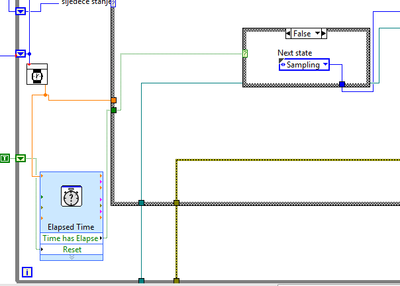

So, I have a state that determines the sampling frequency of the loop cycle, and I am able to change this rate between the values 0.1, 1, and 5 Hz. I implemented it with the Elapsed Time Express VI, which is inside the loop but outside the case structure for the states. I pass the rate as a time to its "Target Time" input, and its Boolean output "Elapsed time" goes to the case structure, moving to the next state when the time runs out. In the next state, the elapsed time is reset and the cycle starts from the beginning.

So, everything works fine until I look at my log file (a txt file where I save the measured values): at the 5 Hz setting it contains only 3 or at most 4 samples per second.

I have a good enough computer with an i7 processor and 8 GB of RAM.

What could be the problem?

The picture shows how I implemented this with the timer.

Thank you in advance.

Matej

As I mentioned in my previous note, your problem is that you start a new target time for the Elapsed Time VI only ONCE your analog read has completed. This essentially ADDS whatever time the analog read takes on top of the time you ask the Elapsed Time VI to wait.

I modified your VI and I think that should take care of your timing problem.

However, I suggest using "Wait Until Next ms Multiple" because, unlike the Express VI you are using, the one I mentioned allows other processes to do their work in the meantime.

Hope that helps...

-DP
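To put rough numbers on this, here is a small Python sketch of the two timing schemes. The 0.1 s read time is an assumed value chosen for illustration, not a measurement from the posted VI, and both function names are made up.

```python
import math

def rate_sequential(read_time_s, wait_time_s):
    """Loop rate when the wait is started only after the read has finished."""
    return 1.0 / (read_time_s + wait_time_s)

def rate_aligned(read_time_s, period_s):
    """Loop rate when each iteration waits for the next multiple of period_s."""
    periods_needed = max(1, math.ceil(read_time_s / period_s))
    return 1.0 / (periods_needed * period_s)

period = 0.2      # 5 Hz target
read_time = 0.1   # assumed duration of the analog read, in seconds
print(f"sequential: {rate_sequential(read_time, period):.2f} Hz")
print(f"aligned:    {rate_aligned(read_time, period):.2f} Hz")
```

With these assumed numbers the sequential scheme lands between 3 and 4 Hz, while aligning to the period keeps the loop at the 5 Hz target as long as the read fits inside one period.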

-

Butterworth filter sampling frequency

Dear friends, hope it's a good day

I was wondering what the best sampling frequency is for configuring the Butterworth filter. I know that some of you would suggest trying different values starting from 1000, but I am asking for guidance based on your experience.

Thank you very much.

Bill David,

It must be set to the sample rate of the data that you are feeding to the filter. If you acquire data from an analog-to-digital converter, use the sampling frequency of the converter.

I think that the default is to assume that each element of the input array is acquired 1 second after the previous element.

Lynn
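A short Python sketch of why the setting matters: a digital filter's cutoff is fixed relative to the sample rate, so if the filter is configured with one sampling frequency and fed data sampled at another, the real cutoff moves in proportion. The function name and the example numbers are made up for this illustration.

```python
def effective_cutoff_hz(design_cutoff_hz, design_fs_hz, actual_fs_hz):
    """Cutoff actually applied when the data rate differs from the configured rate."""
    cycles_per_sample = design_cutoff_hz / design_fs_hz  # what the filter really stores
    return cycles_per_sample * actual_fs_hz

# Filter configured for 100 Hz cutoff at 1000 S/s, data sampled at 1000 and 2000 S/s
print(effective_cutoff_hz(100.0, 1000.0, 1000.0))
print(effective_cutoff_hz(100.0, 1000.0, 2000.0))
```

When the two rates agree the cutoff is the one you asked for; when the data is sampled twice as fast as configured, the cutoff doubles as well.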

-

AI sampling frequency is incorrect for simulated ENET-9213, WLS-9213, and USB-9213

Hello

With simulated ENET-9213, WLS-9213, and USB-9213 devices, I am able to correctly get the AI sampling frequency = 1351 samples/s using DAQmxGetDevAIMaxSingleChanRate, which is incidentally above the spec'ed value of 1200 S/s.

However, when I create a task, add an AI voltage channel, and then query the sampling frequency of the task, I get a sampling rate of only 9 samples/s. I tried the same code with other devices and got the sampling frequency matching the device data sheet, so it seems that this problem is limited to 9213 devices.

Why does the sampling rate returned for the task by DAQmxGetSampClkMaxRate come back as only 9 S/s?

And why is the conversion rate from DAQmxGetAIConvRate only 18 S/s?

I enclose the test code which may be used to reproduce this problem.

Kind regards

whemdan

The MathWorks

Hello

When I tried this with a simulated USB-9213, I used the Sample Clock Max Rate property, as well as the AIConvert:Max Rate property node. I could see that for 1 channel I could go up to 675.67 S/s, and for 16 channels I could get 79.49 S/s (which totals 1271 S/s, within the specifications). For both multichannel and single channel, I could get an AIConvert Max Rate of 1351.35.

Something that could be happening is that you are not explicitly setting the device to run in high-speed mode. You'll want to set the channel property Get/Set/Reset AI_ADCTimingMode to high speed, and you should see much better results that way.

Something else to note - I use DAQmx 9.0

-

WLS-9163 and 9211, wrong sampling frequency?

Hello!

I have a WLS-9163 with a 9211 module mounted. I have connected a single type K thermocouple to analog input 0.

I can connect and take measurements wirelessly. However, I can only do 7 S/s without an error message.

I get the following error message when I try to sample at 14 S/s in the WLS-9163 configuration.

Error -200081 occurred at the DAQ Assistant

Possible reasons:

Sampling frequency is greater than the maximum sampling frequency for the number of specified channels.

Reduce the sampling frequency or the number of channels. Increasing the conversion rate or reducing the sample delay can also mitigate the problem, if you set either of them.

Number of channels: 2

Sampling rate: 14.000004

Maximum sampling frequency: 7.142857

Channel name: _WLS-14049AF-2/_cjtemp

When I use the 9211 in a cDAQ-9172 configuration I can acquire up to 14 S/s from a single channel with no problems at all.

Somehow it thinks that I chose to measure two channels, which is not the case.

I use LabVIEW 8.5.1 with the 2009-10 hardware drivers on Windows XP SP3.

Is this something you've heard before?

Best regards

Mattias

Hi Mattias,

Your task is also reading the CJC on the unit once per sample, so you end up reading two channels. Reading the CJC (that is the .../_cjtemp in the error message) is required to return a temperature value measured from a thermocouple.

Kind regards

Kyle

-

Different sampling rates with the same AIO connector, LabVIEW FPGA

Hello

I use LV 2009 with the new FPGA toolkit and an NI PXI-7854R. I acquire an analog signal with a sampling frequency of 600 kS/s. I need that sampling rate for processing the data, but I also need the signal sampled at a much lower, variable sampling frequency for an FFT.

I've attached a picture to clarify, in a simple example, what I'm looking for.

I tried using a case structure to take only every i-th iteration, but did not get the expected results.

Does anyone have another idea how to solve my problem? The "Resampling" Express VI in the FPGA function palette does not help me.

Thanks in advance,

Regards

Hello

The connector for the analog input is a "shared resource", so you should read it in only one place in your FPGA code.

Find attached an example that shows how to perform this task.

Regards

Ulrich

AE OR-CER
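The attached example is not reproduced here, so as a rough illustration of the idea (read the input once per iteration, then branch the value into a full-rate path and a decimated path), here is a Python sketch rather than FPGA code. The function name and the `decimation` parameter are assumptions made up for this illustration.

```python
def split_streams(samples, decimation):
    """Read each sample once, then feed a full-rate and a decimated stream."""
    full_rate = []
    decimated = []
    for index, value in enumerate(samples):   # a single read per loop iteration
        full_rate.append(value)               # processing path at the full rate
        if index % decimation == 0:           # keep every decimation-th sample
            decimated.append(value)
    return full_rate, decimated

fs = 600_000                                  # acquisition rate from the question, S/s
full, slow = split_streams(list(range(12)), decimation=4)
print(len(full), len(slow), fs / 4)
```

The decimated stream has an effective rate of fs divided by the decimation factor, and that factor can be changed at run time without touching the acquisition itself.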

-

With audio files (in particular WAV), Audio sample rate and Audio sample size are not among the choices available in the list of details in Vista. In earlier versions of Windows (2000 and XP) they were both selectable as details. Is it possible to get these to appear under Vista?

Vista - related audio details available:

Album

Album artist

Bit depth

Bitrate

Duration

Genre

Year

2000 / XP - audio-related details available:

The album title

Artist

Audio sampling rate

Audio sample size

Bitrate

Genre

Title

The track number

Year

FWIW, sampling frequencies are discussed in the Help and How-to article (below).

Reference: http://windowshelp.microsoft.com/Windows/en-US/Help/53adb4c7-d538-42f8-bb13-917379922afe1033.mspx

Thank you!

For third-party applications that perform many tasks, I usually check www.tucows.com and www.download.com. They have a wide variety of programs, and the trick is to put in the correct search terms to find what you are looking for. Make sure that your selection is compatible with Windows Vista, and at tucows, try to pick one with 4 or 5 cows because those are the highest rated.

Good luck! Lorien - MCSE/MCSA/Network+/A+

-

Sampling frequency of input/output does not match.

Hi all

I recently moved from Audacity to Audition and I have a small question: I have a Zoom H2n that worked well with Audacity, but when I tried my first recording with Audition, I was told that my input and output sample rates do not match. I've already looked around and found that I need to change the format of my speakers to match the input, 44.1 kHz, which is what my Zoom H2n is set to; however, I was wondering if there is a way to just ignore it. Does the output matter in any case? The default for my speakers is studio quality, 24 bit, 48000 Hz. Did I miss an option in the Audition settings? Do I really need to drop the speakers down to 16 bit, 44100 Hz?

Thanks in advance for any help.

Peace.

If you use the Zoom as an interface to Audition, then you really must match your session's sampling frequency to that of the interface; otherwise Audition is going to be resampling on the fly.

So when you create a new session, set it to the same rate as the Zoom, or vice versa.

You can also go to Edit > Preferences > Audio Hardware and check or uncheck "Attempt to force hardware to document sample rate".

-

Getting the frequency value from the power spectrum

I'm rather new to LabVIEW and want to measure the frequency of the peak in the power spectrum of a real signal. In addition, I want to save this frequency value and the amplitude to a file. Right now I am able to plot a power spectrum using an Express VI, which shows me the correct frequency value in the graph.

However, I'm not able to extract the frequency value with the various VIs I found in LabVIEW after browsing through the discussions in this forum. Can someone please point me in the right direction? I use an NI PXI-5124 digitizer in an NI chassis to record the signal.

If it is the dominant frequency you are looking for, you can use the Extract Single Tone Information VI. You can also configure this VI with search details if it isn't the dominant frequency; I have not included that in my example, but you can check it in the help file.

Ian
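For readers who want to see what finding the dominant frequency amounts to, here is a minimal Python sketch. It is not the LabVIEW VI and does not interpolate between bins the way a dedicated tone-extraction routine might. It computes a plain DFT power spectrum and returns the frequency and power of the largest non-DC bin; the function names and the power scaling are choices made for this illustration.

```python
import cmath
import math

def power_spectrum(samples):
    """Power per DFT bin (0 .. n/2) of a real signal, scaled by 1/n^2."""
    n = len(samples)
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(samples[i] * cmath.exp(-2j * math.pi * k * i / n) for i in range(n))
        spectrum.append(abs(acc) ** 2 / n ** 2)
    return spectrum

def peak_frequency(samples, fs):
    """Return (frequency in Hz, power) of the largest bin, ignoring DC."""
    spectrum = power_spectrum(samples)
    k = max(range(1, len(spectrum)), key=lambda i: spectrum[i])
    return k * fs / len(samples), spectrum[k]

fs = 1000.0
tone = [2.0 * math.sin(2.0 * math.pi * 50.0 * i / fs) for i in range(200)]
freq, power = peak_frequency(tone, fs)
print(freq, power)
```

The frequency resolution of this approach is fs divided by the number of samples, so a tone that falls between bins is reported at the nearest bin.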

-

Mismatch between the DAQ Assistant rate and the dt of the output waveform

I use an NI 9239 to acquire analog input data. In the DAQ Assistant, I set the acquisition mode to N Samples, the samples to read to 8k, and the acquisition rate to 8k. I wire the data output of the DAQ Assistant directly to a waveform indicator.

I expect the dt of the waveform to be 0.000125. I get 0.000120, which corresponds to an acquisition rate of 8333.333 Hz.

What am I doing wrong?

Thank you.

Read the 9239 specifications to answer your question. Focus on pages 18/19.

Bonus tip:

You specify the MINIMUM sampling frequency for the acquisition in the DAQ Assistant.

hope this helps,

Norbert
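As a sketch of how a request for 8 kHz can turn into 8333.333 Hz: the Python below assumes the module only supports rates of the form (master timebase / 256) / n with an integer divisor n, and that a request is rounded up to the next supported rate. The 12.8 MHz timebase and the divisor range of 1 to 31 are recalled from the module specifications and should be checked against the pages cited above; the function names are made up.

```python
def supported_rates_hz(master_timebase_hz=12.8e6, max_divisor=31):
    """Assumed rate table: (timebase / 256) / n for n = 1 .. max_divisor."""
    base = master_timebase_hz / 256.0
    return [base / n for n in range(1, max_divisor + 1)]

def coerce_up(requested_hz, rates):
    """Smallest supported rate that is at least the requested rate."""
    candidates = [rate for rate in rates if rate >= requested_hz]
    return min(candidates) if candidates else max(rates)

actual_rate = coerce_up(8000.0, supported_rates_hz())
print(f"rate = {actual_rate:.3f} Hz, dt = {1.0 / actual_rate:.6f} s")
```

Under these assumptions the nearest supported rate at or above 8 kHz is 50 kHz / 6, which gives the dt of 0.000120 seen on the waveform.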
